The Shift from Screen to Interactive Experience Space

Image: Glasses-free 3D display (stereo display by Alioscopy), photographed at Laval Virtual 2026 by Ulrich Buckenlei | © Ulrich Buckenlei

Displays are undergoing a fundamental transformation. Content is no longer presented as a flat surface, but experienced as space. Technologies such as eye tracking, artificial intelligence, and real-time processing are merging into interactive systems that elevate processes, decisions, and collaboration to a new visual level.

What was long considered a screen is now evolving. Displays respond to movement, perspective, and gaze direction, creating a spatial effect without the need for additional devices. Content no longer appears on a surface, but seems physically anchored in space.

This development is not a single technological leap. It emerges from the interaction of multiple systems. Artificial intelligence, real-time processing, and new display architectures converge to enable stable, interactive 3D visualizations in operational use. This becomes especially relevant wherever multiple people work with a system simultaneously and make decisions together.

New approaches already demonstrate that displays can recognize two users in parallel and calculate individual perspectives. Each viewer sees their own correctly aligned 3D image. This creates a new form of digital interaction that is no longer tied to individual devices, but functions as a shared visual space.

For companies, this results in clear added value. Information becomes spatially tangible. Products can be evaluated realistically, data becomes more intuitive, and complex relationships are easier to understand. As a result, decisions become more informed and collaborative.

From Screen to Spatial Interface

For a long time, the logic of screens was clear. Content was displayed in two dimensions and independently of the viewer. Interaction took place via mouse, touch, or controller. This paradigm is now dissolving.

Modern displays detect the user’s position and dynamically adapt content. Perspectives change in real time, depth information is calculated correctly, and visual elements respond to movement. This creates not just a better image, but an entirely new understanding of interaction.

This shift becomes particularly evident in systems with integrated eye tracking. They precisely capture gaze direction and align content accordingly. Combined with artificial intelligence, adaptive systems emerge that do not just display, but interpret and react based on context.

This development can be summarized in three key changes:

- Displays become perspective-based and user-dependent instead of static

- Interaction shifts from input to spatial perception

- Multiple users can see different content simultaneously

This creates a new category of interfaces. The screen is no longer understood as a device, but as a space. Content is no longer flat, but has depth, position, and context.

Concept visualization of a glasses-free 3D display with eye tracking, AI-supported rendering, and directional light control for spatial perception in a multi-user context

Graphic: Visoric Research based on current developments in spatial display and eye tracking technologies

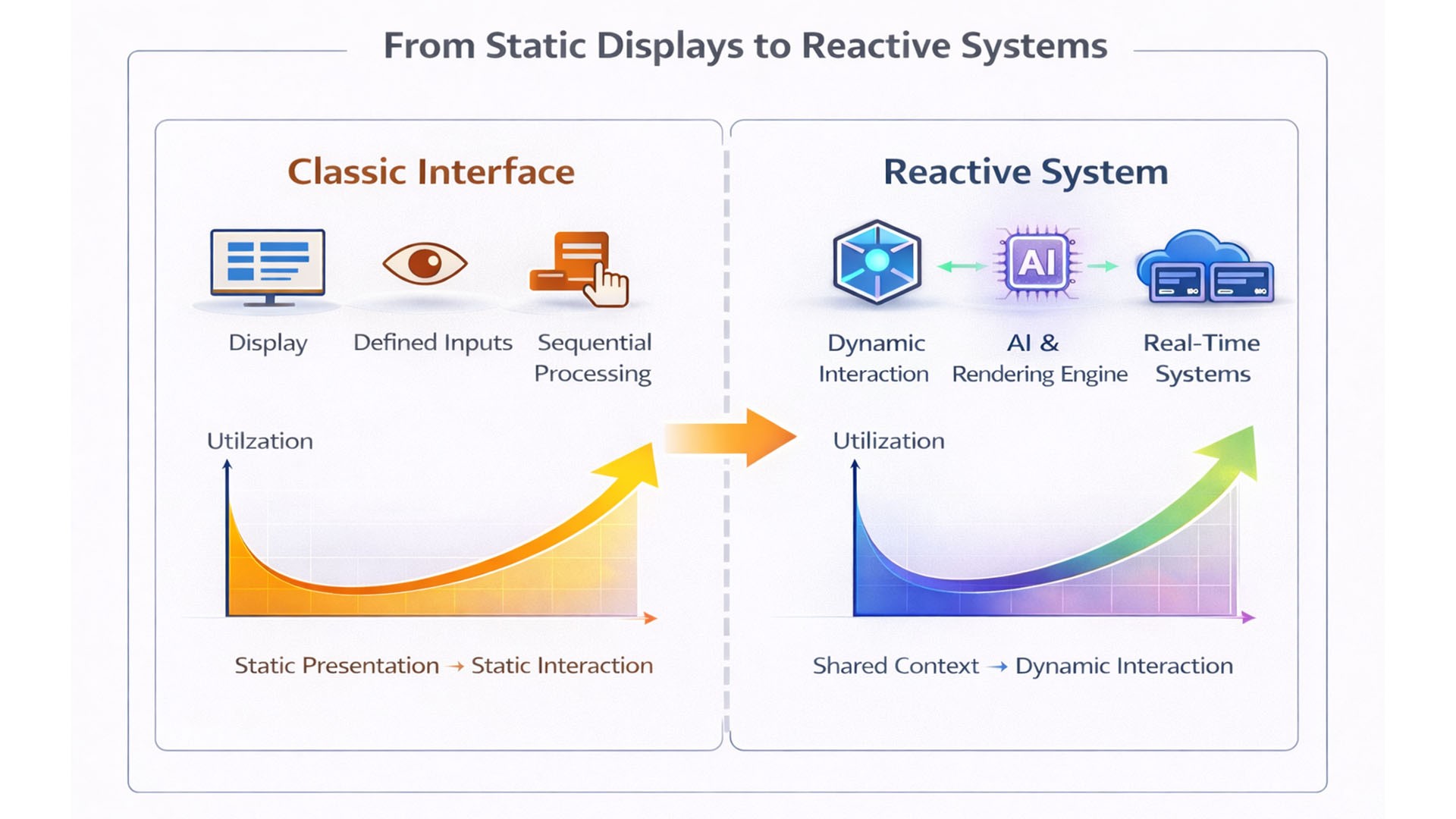

The graphic illustrates the structural difference between traditional display systems and reactive, AI-supported systems. On the left, the traditional logic is visualized. Content is displayed independently of the user, inputs are made via clearly defined interfaces, and processing follows sequential workflows. The screen functions as a static output medium that does not adapt to the specific usage context.

On the right, a reactive system is contrasted. Here, visualization is no longer based on fixed content, but on continuous computation. Interaction no longer occurs solely through explicit input, but implicitly through movement, gaze direction, and situational context. An AI-supported rendering engine processes these signals in real time and generates dynamic visualizations that continuously adapt.

Central to this is the shift from isolated usage to shared context. While traditional systems address individual users, reactive displays enable simultaneous, perspective-correct visualization for multiple viewers. A shared visual space emerges where information can not only be viewed, but jointly interpreted and discussed.

The usage curves shown in the lower section also highlight this difference. Traditional systems reach their functional limits relatively early, as interaction and understanding remain tied to fixed structures. Reactive systems, on the other hand, unfold their value over time. With increasing interaction, their benefit grows, as content adapts dynamically and complex relationships become more accessible.

The graphic thus does not describe a linear progression, but a fundamental paradigm shift. The screen evolves from a passive output medium into an active system that connects perception, interaction, and context.

However, this transformation does not originate from the visualization itself. It is based on a technological foundation where multiple systems interact precisely. Only the combination of sensing, intelligent processing, and modern display architecture enables real-time adaptation and spatial experience.

This shifts the focus from the visible surface to the underlying conditions. The next chapter therefore examines not the application, but the foundation. The key question is which technological developments enable this new type of display system and why the conditions are met right now.

The Technological Foundations of Spatial Displays

Spatial displays are not created by a single innovation, but by the interaction of several technological developments. For a long time, individual components already existed, but not with the required quality, speed, and integration. Only now are they reaching a level of maturity that enables stable, interactive systems.

At the core are three layers: sensing, processing, and visualization. What matters is not their existence, but their simultaneous performance. Only when all three operate precisely and in real time can a system respond to users, perspective, and context.

Sensing has made a decisive leap forward. Eye tracking systems today are significantly more precise and stable than just a few years ago. They continuously deliver data on gaze direction and position, forming the basis for spatial adaptation.

At the same time, processing has fundamentally changed. Modern GPUs and specialized AI systems enable large volumes of data to be analyzed with minimal latency and multiple perspectives to be calculated simultaneously. Without this real-time capability, spatial visualization would appear unstable or unusable.
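Why latency matters here can be made concrete with a rough calculation. The sketch below estimates how far the rendered perspective lags behind a moving viewer. The function name and all numbers (lateral speed, latency, viewing distance) are illustrative assumptions, not measurements from any system described in this article.

```python
import math


def perspective_lag(lateral_speed_m_s, latency_s, viewing_distance_m):
    """Return (positional lag in metres, angular error in degrees).

    While the system captures, computes, and displays a frame, the viewer
    keeps moving, so the image is rendered for a slightly outdated position.
    """
    lag_m = lateral_speed_m_s * latency_s
    angle_deg = math.degrees(math.atan2(lag_m, viewing_distance_m))
    return lag_m, angle_deg


# Assumed example: head moving sideways at 0.5 m/s, 0.7 m from the display.
for latency_ms in (10, 50, 100):
    lag, angle = perspective_lag(0.5, latency_ms / 1000, 0.7)
    print(f"{latency_ms:>3} ms latency: {lag * 100:.1f} cm lag, {angle:.2f} deg off")
```

Under these assumptions the lag grows linearly with latency, which suggests that the whole chain from capture to light output has to be fast, not only the rendering step.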

Visualization has also advanced. Improvements in light control and display architecture make it possible to direct different image information into different viewing directions. This enables robust, glasses-free 3D visualization, even in multi-user scenarios.

This development can be summarized in three key prerequisites:

- Precise sensing continuously captures user position and gaze direction

- Real-time processing enables parallel calculation of multiple perspectives

- Modern displays control light directionally for spatial perception

System convergence of tracking, real-time processing, and display technology as the foundation for spatial, interactive systems

Graphic: Editorial analysis | Visualization: © Ulrich Buckenlei | Visoric GmbH

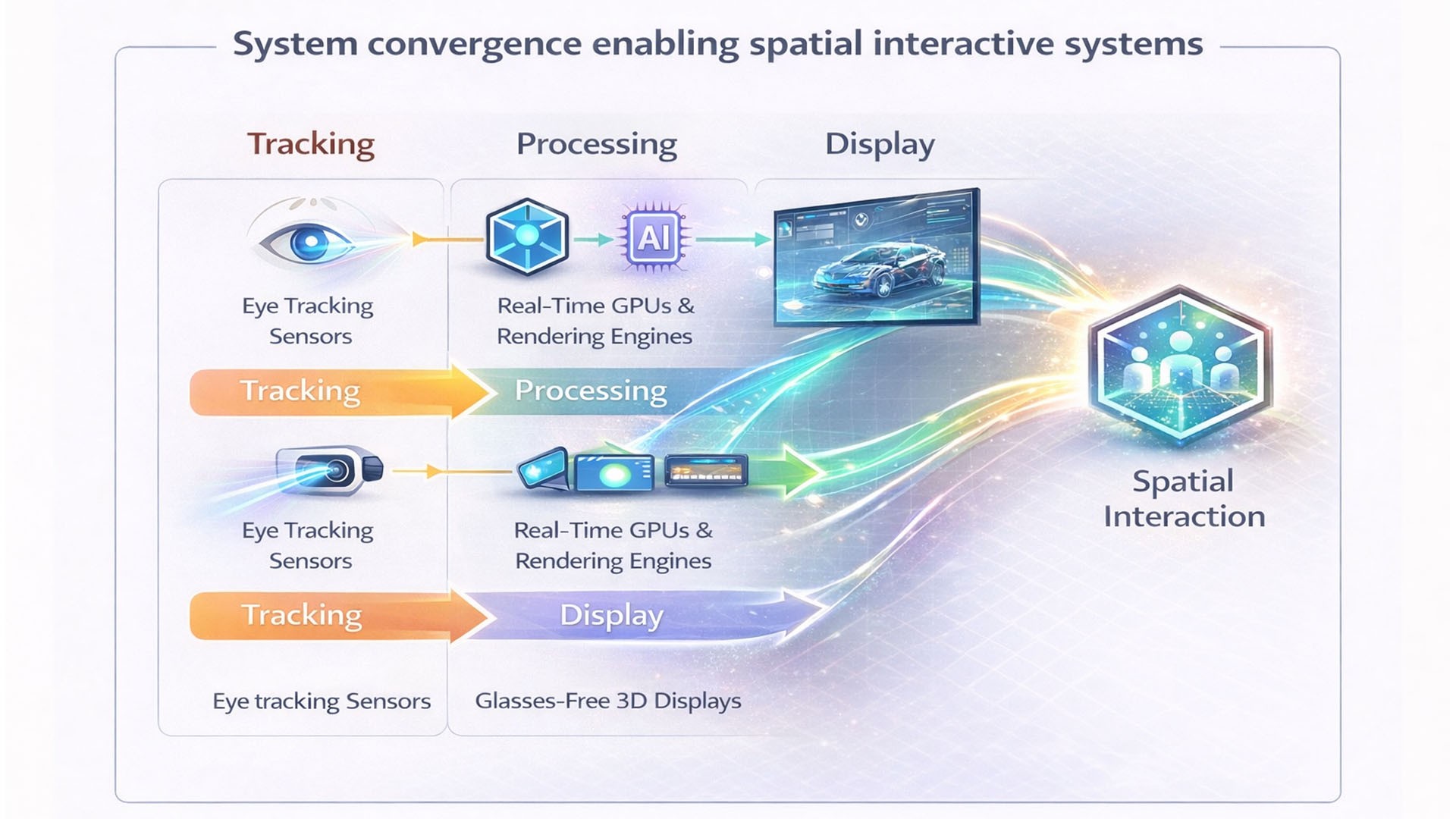

The graphic illustrates this convergence. On the left, individual technological building blocks are shown, which existed separately for a long time. Sensing, processing, and visualization evolved independently and could only be combined to a limited extent.

At the center, the key transformation becomes visible. Systems are no longer separate, but tightly interconnected. Data is captured, processed, and translated into visualization in real time, creating a continuous loop where perception and reaction are inseparable.

On the right, the result of this integration is visible. Instead of isolated functions, a coherent system emerges that dynamically adapts to the user. Visualization is no longer predefined, but continuously computed and contextually adjusted.

This shows that the current development step is not based on a single innovation, but on the simultaneity of multiple technological maturity processes. Only this convergence enables stable spatial interaction.

This leads to the next key question: if the technological prerequisites are met, where do practical applications emerge, and what value do they provide in real operations?

This question can only be answered through real systems. Only in practical implementation does it become clear how these technological developments translate into real interaction.

A later chapter examines one of the first concrete implementations: glasses-free 3D displays with integrated eye tracking, which already demonstrate how spatial visualization, real-time processing, and user-dependent perspectives combine into a functional system.

This example shows how technological convergence leads to practical applications and what new possibilities arise for perception, collaboration, and decision-making.

Why Spatial Displays Are Becoming Reality Now

The technologies described are not new. Eye tracking, real-time rendering, and directional light control have existed for years. Yet they have not been widely adopted. The key difference lies not in their existence, but in their current maturity and integration.

Only now are multiple developments reaching a point where they can reliably work together. Computing power is available, sensing is precise and stable, and AI systems can interpret complex data in real time. This simultaneity fundamentally changes the starting point.

A central factor is modern hardware. GPUs and specialized chips now enable parallel calculation of multiple perspectives without noticeable delay. At the same time, these systems have become more compact and cost-efficient, making their use outside research labs possible.

On the software side, a major shift has also occurred. Real-time engines and AI models can now process large data streams reliably and translate them into adaptive visualization. This creates systems that no longer react statically, but continuously adapt to the user.

Another driver is rising expectations of interaction. Users are accustomed to dynamic, context-aware systems. Interfaces that do not respond feel increasingly inadequate. Spatial visualization is therefore not just a technological development, but also a logical consequence of changing user expectations.

This can be summarized in three key drivers:

- Computing power enables stable real-time processing of complex data

- Sensing provides precise and continuous information about users and context

- AI systems connect data, interpretation, and visualization into adaptive interfaces

Convergence of computing power, sensor precision, and artificial intelligence as the foundation for the transition from experimental systems to real spatial interfaces

Graphic: Visoric Research on technological maturity and integration of spatial display systems

The graphic shows the temporal evolution of these technologies. While individual components were available early on, they did not reach the required performance for stable applications. Only with the simultaneous rise of computing power, sensor precision, and AI processing does a range emerge where these systems function reliably.

This becomes particularly clear in the overlap of the curves. The point where they intersect marks the transition from experimental approaches to operational systems. This is exactly where spatial displays are today.

This explains why this technology is entering the market relatively abruptly. It is not a single innovation, but the result of technological synchronization.

This synchronization creates the basis for real applications. Only now can systems emerge that are stable enough for everyday use and deliver real value.

The next chapter therefore examines one of these applications in detail.

Immersive Display Spaces as the Next Stage of Spatial Interfaces

Now that the technological foundations have been described, it becomes clear how these developments manifest in concrete systems. Immersive display spaces are among the first applications in which spatial interfaces are no longer merely simulated, but can be physically experienced.

Unlike traditional screens, these are not individual displays, but connected visual environments. Walls, floors, and in some cases additional surfaces are combined into one continuous projection or LED surface. This creates not a limited field of view, but a walkable visual space.

The visualization is based on continuously calculated perspectives that dynamically adapt to the users’ position and movement. Content reacts in real time to location within the space and creates a stable spatial impression.
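One common way to compute a position-dependent perspective for a fixed screen is an off-axis (asymmetric) view frustum. The article does not state which method the systems shown here use, so the following is only a minimal sketch of that general technique. The function name, the coordinate convention (metres, screen normal pointing toward the viewer), and the example wall dimensions are assumptions.

```python
import math


def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def _unit(a):
    length = math.sqrt(_dot(a, a))
    return tuple(x / length for x in a)


def off_axis_frustum(eye, lower_left, lower_right, upper_left, near=0.1):
    """Return (left, right, bottom, top) frustum extents at the near plane.

    The screen is a rectangle given by three of its corners. As the tracked
    eye moves, the frustum becomes asymmetric so that the image stays
    geometrically correct for that viewing position.
    """
    right = _unit(_sub(lower_right, lower_left))
    up = _unit(_sub(upper_left, lower_left))
    normal = _unit(_cross(right, up))      # points from the screen to the viewer

    to_ll = _sub(lower_left, eye)
    to_lr = _sub(lower_right, eye)
    to_ul = _sub(upper_left, eye)

    distance = -_dot(to_ll, normal)        # eye-to-screen distance
    if distance <= 0:
        raise ValueError("eye must be in front of the screen")

    scale = near / distance
    return (_dot(right, to_ll) * scale, _dot(right, to_lr) * scale,
            _dot(up, to_ll) * scale, _dot(up, to_ul) * scale)


# Assumed wall: 2 m wide, 1 m high, centred on the origin in the z = 0 plane.
wall = ((-1.0, -0.5, 0.0), (1.0, -0.5, 0.0), (-1.0, 0.5, 0.0))
print(off_axis_frustum((0.0, 0.0, 2.0), *wall))   # centred viewer: symmetric
print(off_axis_frustum((0.5, 0.0, 2.0), *wall))   # viewer moved right: asymmetric
```

In a multi-surface room, a calculation of this kind would be repeated per surface and per frame, each with its own screen corners.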

What is especially relevant is the physical expansion of the display surface. By integrating floor and wall areas, a visual continuity emerges that fully surrounds the user. Perspectives change as people move through the space, creating an immersive experience without requiring additional devices such as headsets.

These systems enable a new form of multi-user interaction. Several people can move through the space at the same time, perceive content from different viewpoints, and interpret it together. The focus shifts from individual display toward a shared visual experience.

- Connected display surfaces replace individual screens

- Spatial visualization is created through movement in space rather than through devices

- Multiple users interact simultaneously within the same visual environment

Immersive display space with connected wall and floor surfaces enabling spatial interaction without headsets

Motif: Immersive display space with continuous floor and wall visualization | Image: © Ulrich Buckenlei

The illustration shows such a system in practical use. At the center is not a single display, but a spatially extended visualization that spans multiple surfaces and visually surrounds the user.

In the upper area, it becomes clear that sensing and tracking play a central role. Cameras continuously capture the position of users in the room and enable dynamic adaptation of the content.

In the middle area, processing takes place. A real-time system continuously calculates the appropriate perspective for the current position in the room. As a result, the visualization remains stable and coherent, even during movement.

In the lower area, the true strength of these systems becomes visible. By combining multiple synchronized display surfaces, a visual continuity is created that gives the impression of one connected space.

What stands out is the change in interaction. Users no longer move in front of a screen, but within a visual system. Movement and position replace traditional inputs and become the central control layer.

This creates a new form of digital interface. The screen becomes the environment, and information is no longer merely shown, but experienced as space.

At the same time, a key technological limitation becomes visible. While immersive spaces are excellent for shared experiences, they do not offer individually optimized perspectives for each user.

This is exactly where glasses-free 3D displays with eye tracking come into play. They make it possible to calculate and output different, perspective-correct visual content for several people at the same time.

The following chapter shows how these systems work and how eye tracking, real-time processing, and directional light output create an individual spatial perception for multiple users simultaneously.

How Glasses-free 3D Displays with Eye Tracking Create Spatial Perception

With immersive display spaces described as shared spatial interfaces, the next question is how individual spatial perception is technically realized. Glasses-free 3D displays with eye tracking make it possible to generate different, perspective-correct visual content for multiple users at the same time.

At the center is not a static image, but a dynamic process of capture, calculation, and targeted image output. The visual representation only emerges through the interaction of several system components working together in real time.

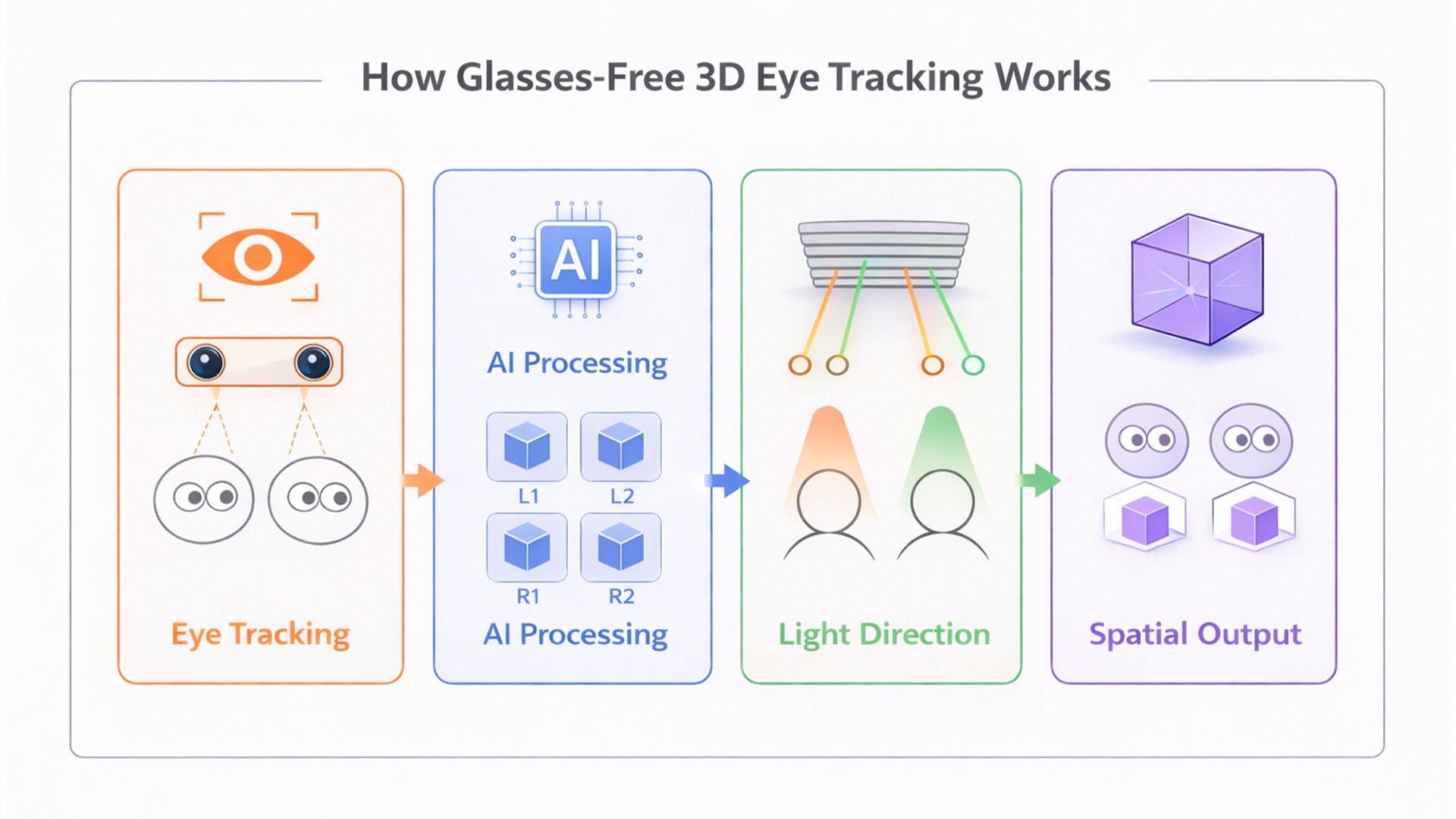

The first step is precise user position tracking. Cameras and sensors continuously determine the position and gaze direction of the eyes. This data forms the basis for every further calculation.

Based on this, processing takes place. A real-time system calculates an individual perspective of the displayed object for each user. Several image variants are generated in parallel, each precisely matched to the respective position in the room.

In the next step, the visualization is controlled. The system directs light into different directions in a targeted manner, so that each user only perceives the perspective calculated for them. As a result, several people see different images on the same display surface at the same time.

Only through this final step does the spatial impression emerge. The brain interprets the individually aligned visual information as a three-dimensional structure that appears stably anchored in space.
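The depth impression described above rests on simple geometry: each eye sees a virtual point at a slightly different place on the screen. The sketch below projects one point onto the screen plane for both eyes and reports the horizontal offset between the two projections. The helper names, the 64 mm eye spacing, the upright head pose, and the coordinate convention (screen at z = 0, viewer at positive z, metres) are assumptions made for illustration.

```python
def project_to_screen(eye, point):
    """Intersect the ray from eye to point with the screen plane z = 0."""
    ex, ey, ez = eye
    px, py, pz = point
    if ez <= 0 or pz >= ez:
        raise ValueError("eye must be in front of the screen and of the point")
    t = ez / (ez - pz)                     # ray parameter at the screen plane
    return (ex + t * (px - ex), ey + t * (py - ey))


def eyes_from_head(head, ipd=0.064):
    """Assumed upright head: eyes offset horizontally by half the eye spacing."""
    hx, hy, hz = head
    return (hx - ipd / 2, hy, hz), (hx + ipd / 2, hy, hz)


def screen_parallax(head, point, ipd=0.064):
    """Horizontal offset (right minus left) of the point's two projections."""
    left, right = eyes_from_head(head, ipd)
    return project_to_screen(right, point)[0] - project_to_screen(left, point)[0]


head = (0.0, 0.0, 0.7)                              # viewer 0.7 m from the display
print(screen_parallax(head, (0.0, 0.0, -0.3)))      # point behind the screen
print(screen_parallax(head, (0.0, 0.0, 0.0)))       # point on the screen plane
print(screen_parallax(head, (0.0, 0.0, 0.2)))       # point in front of the screen
```

A positive offset corresponds to a point perceived behind the display, zero to a point on its surface, and a negative offset to a point floating in front of it.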

- Eye Tracking → Capture of each user’s position and gaze direction

- Real-Time Processing → Parallel calculation of individual perspectives for each user

- Directional Light → Targeted alignment of image information for each user

- Spatial Output → Stable spatial perception without additional devices

How glasses-free 3D eye tracking systems work, from gaze tracking through AI-supported processing and light control to spatial visualization for multiple users at the same time

Graphic: Functional principle of a glasses-free 3D display with multi-user rendering | Image: © Ulrich Buckenlei

The illustration shows this process in four clearly separated steps. On the left side, the capture stage is shown. Cameras recognize multiple users at the same time and precisely determine the position of their eyes.

In the second step, processing takes place. Separate image information is calculated for each recognized person. What is crucial here is the parallel calculation of multiple perspectives, which must happen synchronously and with minimal latency.

In the third step, it becomes visible how the visualization is implemented technically. The display sends different light rays in different directions. Each user receives only the part of the image information intended for their position.

On the right side, the result emerges. Multiple people see a spatial object at the same time, even though they are looking at the same physical surface. Each perspective is individually correct, creating a stable three-dimensional impression.
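The third step, directing each image only to the eye it was calculated for, can be sketched as a lookup from eye position to emission direction. The model below is deliberately simplified and hypothetical: it assumes a display that splits a horizontal emission cone into a fixed number of equal angular zones. The zone count, cone width, and all names are assumptions and do not describe a specific product.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Eye:
    user: str
    side: str    # "left" or "right"
    x: float     # metres, horizontal offset from the display centre
    z: float     # metres, distance in front of the display


def zone_for(eye, num_zones=64, fov_deg=60.0):
    """Return the index of the directional zone that reaches this eye, or None."""
    angle = math.degrees(math.atan2(eye.x, eye.z))
    half = fov_deg / 2
    if abs(angle) > half:
        return None                        # outside the emission cone
    fraction = (angle + half) / fov_deg
    return min(int(fraction * num_zones), num_zones - 1)


def assign_views(eyes, num_zones=64, fov_deg=60.0):
    """Map zone index -> (user, side); fail if two eyes would share a zone."""
    table = {}
    for eye in eyes:
        zone = zone_for(eye, num_zones, fov_deg)
        if zone is None:
            continue
        if zone in table:
            raise ValueError(f"zone {zone} is needed by two eyes at once")
        table[zone] = (eye.user, eye.side)
    return table


# Two assumed viewers, eyes 64 mm apart, at different positions.
eyes = [
    Eye("A", "left", -0.332, 0.8), Eye("A", "right", -0.268, 0.8),
    Eye("B", "left", 0.268, 0.8), Eye("B", "right", 0.332, 0.8),
]
for zone, owner in sorted(assign_views(eyes).items()):
    print(zone, owner)
```

The collision check hints at a practical limit of any such scheme: viewers who stand too close together, or too far away, can end up needing the same emission direction.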

What stands out is that these systems combine individual perception with shared use. Each user gets their own view, while shared interaction in the same space remains possible.

This creates a new quality of visual systems. Content is no longer simply displayed, but individually calculated for each viewer while remaining experienceable in a shared context.

For companies, this opens new possibilities in areas such as product development, simulation, and decision-making. Complex content can be viewed from multiple perspectives at the same time and directly evaluated together.

The following chapter therefore examines which technical and organizational requirements must be met to integrate these systems into existing infrastructures and deploy them at scale.

Integration, Scaling, and Organizational Requirements

As spatial displays reach increasing maturity, the question is no longer whether these systems can be used, but how they can be meaningfully integrated into existing structures. The transition from isolated demonstrations to productive applications requires clear strategic and technical integration.

A central aspect is integration into existing system landscapes. Spatial displays only deliver their full value when they are connected with available data sources, software solutions, and processes. Isolated applications may create visual effects, but they do not provide lasting benefit.

At the same time, infrastructure and performance requirements increase. Real-time processing, parallel perspective calculation, and continuous sensing require stable systems with low latency. Companies must therefore decide which parts of the processing should happen locally and where centralized or cloud-based systems should be added in a meaningful way.
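The local-versus-cloud decision can be framed as a latency budget. The sketch below sums assumed per-stage latencies for two hypothetical deployments and checks them against an assumed budget. Every stage name and number is a placeholder chosen for illustration and would need to be replaced with measured values.

```python
def fits_budget(stage_latencies_ms, budget_ms):
    """Return (total latency in ms, whether it stays within the budget)."""
    total = sum(stage_latencies_ms.values())
    return total, total <= budget_ms


# Hypothetical per-stage latencies in milliseconds.
LOCAL = {"tracking": 8, "rendering": 10, "display": 8}
CLOUD = {"tracking": 8, "network_round_trip": 40, "rendering": 6, "display": 8}
BUDGET_MS = 30   # assumed end-to-end target

for name, stages in (("local", LOCAL), ("cloud", CLOUD)):
    total, ok = fits_budget(stages, BUDGET_MS)
    print(f"{name}: {total} ms, within budget: {ok}")
```

With numbers like these, one plausible split is to keep tracking and perspective rendering close to the display and to move less time-critical work, such as data aggregation, to central systems.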

Organizationally, a new demand also emerges. The introduction of spatial interfaces changes not only tools, but ways of working. Teams must learn to interpret information spatially, make decisions visually, and work together in new forms of interaction. Without this adaptation, the technology’s potential remains unused.

Another decisive factor is scaling. While initial systems are often deployed in pilot projects, the question of broader rollout quickly follows. Standardization, maintainability, and security therefore become central topics.

- System Integration → Connection with existing data, tools, and processes

- Infrastructure → Ensuring real-time capability and stable performance

- Organization → Adapting workflows and decision-making processes

Integration of spatial displays into existing system architectures with data sources, real-time processing, and visual interaction

Graphic: Visoric Research on the integration of spatial displays into enterprise systems

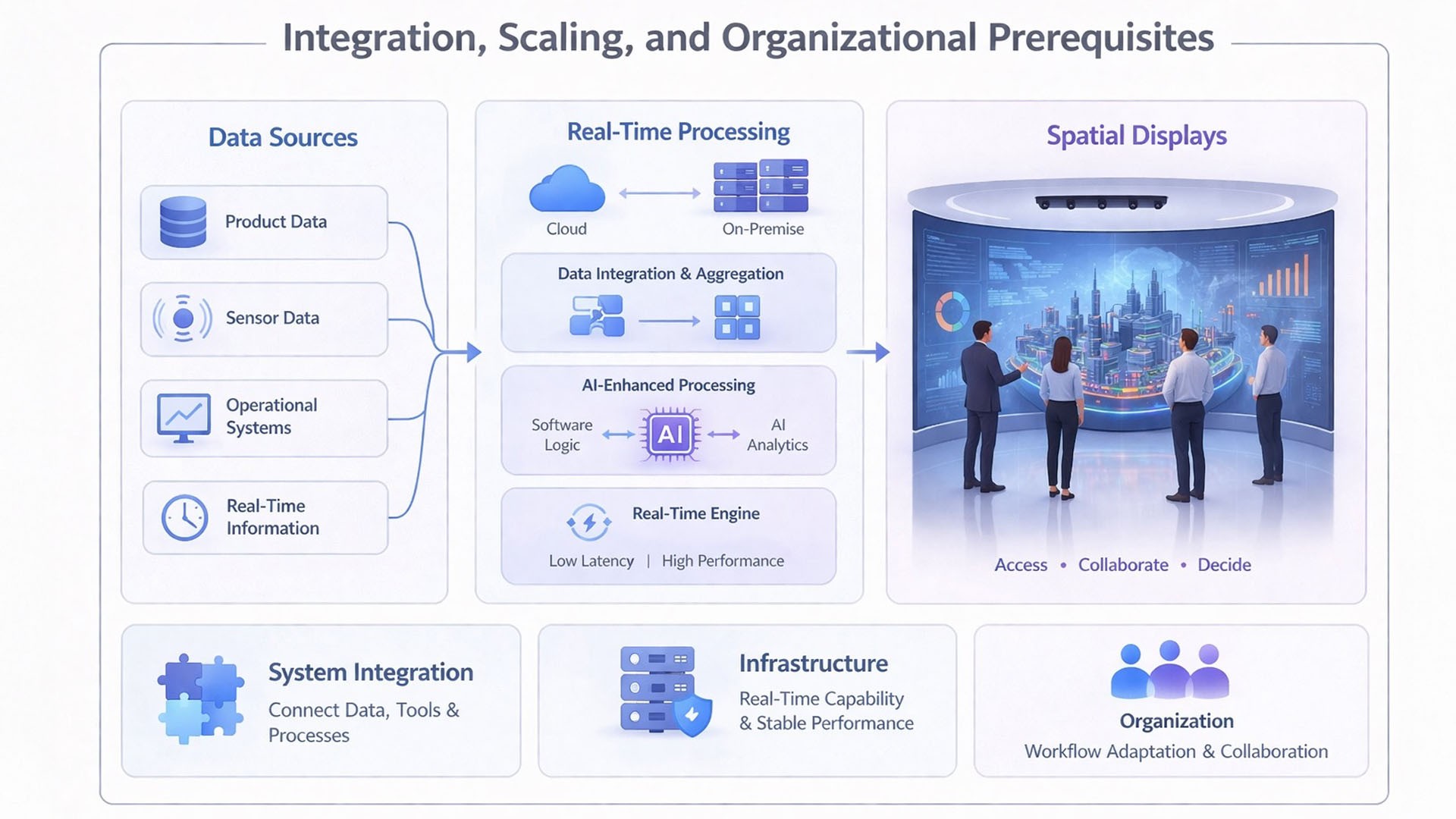

The illustration shows how spatial displays are embedded in an existing enterprise architecture. On the left side are different data sources, ranging from product data and sensor data to real-time information from operational systems.

Processing takes place in the central area. Data is aggregated, analyzed, and transformed into a form suitable for spatial visualization. The combination of classical software logic and AI-supported processing plays a decisive role here.

On the right side, the application becomes visible. Spatial displays function as the interface through which users access this data and work with it together. The difference from traditional interfaces is that information is not consumed in isolation, but interpreted in context.

It becomes especially clear that such systems cannot be understood as a single application. They are part of a higher-level architecture that connects data, logic, and visualization. This integration is exactly what determines whether a system is scalable in the long term.

This finally shifts the focus from technology to application. Spatial displays are not only a new interface, but a tool for redesigning processes, decision logic, and collaboration.

How this new form of interaction actually feels and performs in real use, however, is best observed directly rather than only described.

The following section therefore presents a real example that makes the principles described visible in application and allows the transformation from the static screen to the reactive spatial system to be experienced directly.

From Screen to Spatial Experience

The following video shows how displays are evolving from classic output surfaces into spatial systems. It makes clear that this is no longer just about visualization, but about a new form of interaction with digital content.

At the center is the ability to calculate perspectives in real time. Content responds immediately to the user’s position. Movement becomes the interface, while depth, geometry, and visualization adapt dynamically.

This creates the impression of a physical space without requiring additional devices such as headsets. The user no longer looks at content from the outside, but moves within a digital environment.

This development marks a fundamental shift. Digital content is no longer merely displayed, but made spatially experienceable. Displays become systems that actively control and adapt perception.

In combination with AI-generated or scanned 3D data, a new flexibility emerges. Content can not only be displayed, but also modified, reused, and deployed in different contexts.

This fundamentally changes the role of the display. It is no longer a passive surface, but an active interface that creates space and enables interaction.

The screen becomes the environment.

And space becomes the interface.

Reality capture and AI-supported 3D reconstruction as the foundation for new interactive forms of visualization

Credits: milestong_led_display © All rights belong to their respective owners | Analysis and contextualization: Ulrich Buckenlei

This development marks the transition from pure visualization to a system in which physical reality and digital processing are directly connected. This creates new possibilities not only to see information, but to understand and actively use it in its spatial context.

Sources and Further Context

Technological foundations and market trends

Deloitte Insights (2025) – Tech Trends: The Future of Spatial Computing

GlobalData / Verdict (2025) – Spatial Computing Predictions

YORD Studio (2025) – XR Trends for Business

Treeview Studio (2026) – XR Industry Statistics

Display technologies and spatial visualization

Samsung Newsroom (2026) – Spatial Display Announcement, ISE

KOSATEC (2024) – Overview of Modern Display Technologies

MDPI Micromachines (2024) – Directional Autostereoscopic Displays

Scientific research on 3D displays and eye tracking

Ma et al. (2025) – Glasses-free 3D Display with Deep Learning, Nature

Xu et al. (2025) – Eye-tracked Multi-user Glasses-free 3D Display, SID Symposium

Industrial applications and platforms

Apple (2025/2026) – Vision Pro Enterprise Applications

Materna (2025) – Spatial Computing in Industry

Snap Inc. / YORD (2025) – AR Try-On Consumer Study

Video and image sources

milestong_led_display – Immersive LED Display System © All rights belong to their respective owners

Own analysis and research basis

Ulrich Buckenlei (2026) – When Screens Become Spaces

Ulrich Buckenlei (2026) – Dual Viewer 3D Display Analysis

Ulrich Buckenlei (2026) – Analysis and Contextualization of Visual Systems

From Spatial Interfaces to Measurable Business Impact

Spatial displays, artificial intelligence, and real-time processing are currently transforming how companies work with information. Content is no longer just displayed, but made experienceable as an interactive, shared decision space.

The decisive question today is no longer whether these technologies are relevant, but how they can be concretely integrated into existing processes and used productively.

Many companies already have initial approaches in XR, 3D, or AI. Yet what is often missing is the connection between technology, data, and operational application. Systems remain isolated, and potential remains unused.

This is exactly where VISORIC comes in.

Spatial interfaces as decision spaces: VISORIC connects display technology, AI, and data into interactive systems

Source: VISORIC GmbH | Munich

We support companies in turning spatial technologies into real applications with purpose.

- Strategy → Clear roadmaps for spatial computing and interactive systems

- Spatial Interfaces → Development of spatial, user-dependent visualization systems

- AI Integration → Intelligent processing and interpretation of data in real time

- 3D & Data Visualization → Making complex content understandable and experienceable

- Simulation → Spatially analyzing and optimizing processes

- System Integration → Seamless integration into existing IT and data landscapes

- Prototyping → Fast validation of new interaction concepts

The difference is created in execution.

Companies that act now gain clear advantages in speed, decision quality, and collaboration.
