When Reality Becomes a Digital Foundation

Editorial image | Subject: Ulrich Buckenlei capturing a real environment with a smartphone for 3D reconstruction | Context: Analysis of modern reality capture methods between photogrammetry, LiDAR, and AI-based processing | Source: © Ulrich Buckenlei | Visoric GmbH

The digital representation of reality has long been a complex and technical process. High-precision 3D models were created either through elaborate laser scans or through photogrammetry, where three-dimensional structures are reconstructed from hundreds of images. Both approaches delivered usable results, but they demanded considerable effort, cost, and processing time.

LiDAR systems enable direct geometric capture of environments with high accuracy. Because they measure distances actively with laser pulses, they are largely independent of lighting conditions and provide stable data. However, they reach their limits with fine surface structures and with certain materials, such as reflective or transparent surfaces. They are also often cost-intensive and less flexible to deploy. [9][11]

Photogrammetry follows a different approach. It generates 3D models from image data, which allows visual details to be represented much more realistically. The drawback is its dependence on lighting, perspective, and image quality, as well as the relatively complex post-processing it requires. [10][12]

For years, this meant a clear trade-off: either precision through sensors or visual quality through image processing. The two worlds remained separate.

This is exactly where a fundamental shift begins.

With new methods such as Gaussian Splatting, the role of data capture is changing. Instead of complex modeling, the focus shifts to the direct use of real image data. Reality is no longer abstracted but directly transformed into a usable digital form. [1]

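The key change lies not only in quality but in speed. What once required hours or days of processing can now be produced in significantly less time and used immediately. Kerbl et al., for example, report real-time novel-view rendering at 30 frames per second or more at 1080p resolution once a scene has been optimized. [1]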

This establishes a new standard for digital content.

The Turning Point of 3D Capture

Established 3D capture methods each show clear strengths, but also clear limitations. LiDAR provides precise geometry, while photogrammetry delivers visual realism. In practice, however, this often forces compromises between accuracy, effort, and result quality.

This separation is increasingly dissolving.

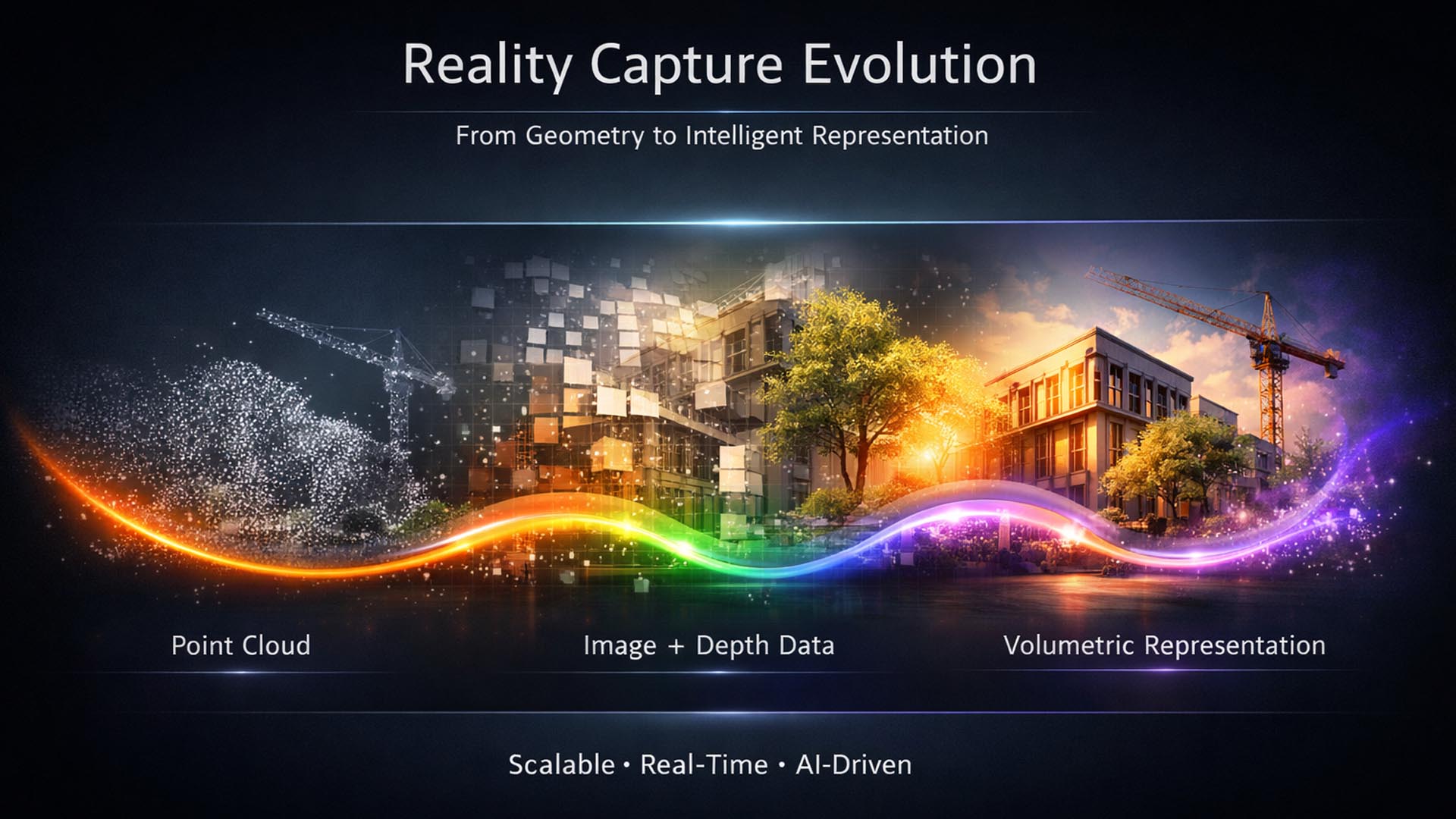

New approaches combine image data, sensor technology, and AI-driven processing into an integrated system. Instead of computing individual points or meshes, they describe scenes as volumetric information. Gaussian Splatting represents a scene with millions of small, anisotropic 3D Gaussians, each carrying a position, a shape (scale and rotation), an opacity, and a view-dependent color; blended together, they produce a realistic representation. [1]

The difference is fundamental. Reality is no longer reconstructed but approximated and directly visualized.
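A minimal sketch of how a single Gaussian's shape can be represented, following the parameterization described by Kerbl et al. [1]: a per-axis scale S and a rotation quaternion q define the covariance Σ = R S Sᵀ Rᵀ, which keeps Σ valid (positive semi-definite) during optimization. The function names are hypothetical, and the code is a plain-Python illustration, not the reference implementation.

```python
import math

def quat_to_rotation(w, x, y, z):
    """Convert a quaternion (w, x, y, z) to a 3x3 rotation matrix, normalizing it first."""
    n = math.sqrt(w * w + x * x + y * y + z * z)
    w, x, y, z = w / n, x / n, y / n, z / n
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]

def covariance(scale, quat):
    """Sigma = R S S^T R^T with S = diag(scale); positive semi-definite by construction."""
    r = quat_to_rotation(*quat)
    m = [[r[i][j] * scale[j] for j in range(3)] for i in range(3)]  # M = R S
    # Sigma = M M^T
    return [[sum(m[i][k] * m[j][k] for k in range(3)) for j in range(3)] for i in range(3)]

def gaussian_weight(point, mean, scale, quat):
    """Unnormalized density exp(-0.5 * d^T Sigma^-1 d), evaluated in the Gaussian's local frame."""
    r = quat_to_rotation(*quat)
    d = [point[i] - mean[i] for i in range(3)]
    local = [sum(r[i][j] * d[i] for i in range(3)) for j in range(3)]  # R^T d
    mahalanobis_sq = sum((local[j] / scale[j]) ** 2 for j in range(3))
    return math.exp(-0.5 * mahalanobis_sq)

# Usage: an elongated Gaussian rotated 90 degrees about the z axis
h = math.sqrt(0.5)
sigma = covariance((2.0, 1.0, 0.5), (h, 0.0, 0.0, h))
print([round(v, 6) for v in (sigma[0][0], sigma[1][1], sigma[2][2])])  # -> [1.0, 4.0, 0.25]
```

The rotation moves the long axis (scale 2) from x to y, which is exactly the kind of anisotropy that lets a few Gaussians cover thin or elongated surface details.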

- LiDAR offers high geometric precision but is cost-intensive and limited in detail depth

- Photogrammetry delivers visual quality but requires complex processing and stable capture conditions

- Gaussian Splatting combines both approaches and enables fast, realistic rendering from image data

Comparison of modern reality capture methods, from classical sensor-based systems to AI-driven rendering

Subject: Strategic visualization | Representation: Combination of point cloud, image data, and volumetric reconstruction | Visualization: © Ulrich Buckenlei | Visoric GmbH

The image illustrates this transition. While traditional methods operate either geometrically or visually, modern approaches show a convergence of both worlds. Point clouds, image information, and volumetric data interact to create a much more coherent overall representation.

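Technically, this is enabled by new rendering approaches and GPU-optimized processing. In Gaussian Splatting, each Gaussian is projected onto the image plane, the resulting splats are sorted by depth, and a tile-based GPU rasterizer blends them front to back. Because these steps parallelize well, even large datasets can be rendered in real time. This makes usage not only more efficient but also significantly more accessible. [4][5]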
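The blending step can be illustrated for a single pixel. In the front-to-back formulation described by Kerbl et al. [1], the pixel color is C = Σᵢ cᵢ αᵢ Tᵢ, with Tᵢ = (1 − α₁)(1 − α₂)⋯(1 − αᵢ₋₁), where splats are sorted from nearest to farthest and αᵢ already includes each Gaussian's projected 2D falloff at that pixel. The sketch below assumes that projection has already happened and uses a hypothetical tuple layout; the real rasterizer performs this per screen tile on the GPU.

```python
def composite_pixel(splats, min_transmittance=1e-4):
    """Front-to-back alpha blending of splats that overlap one pixel.

    `splats`: list of (depth, alpha, (r, g, b)); alpha is the Gaussian's opacity
    already multiplied by its projected 2D falloff at this pixel (assumed input).
    Returns the blended color and the remaining transmittance.
    """
    color = [0.0, 0.0, 0.0]
    transmittance = 1.0  # fraction of light not yet absorbed by nearer splats
    for _depth, alpha, rgb in sorted(splats, key=lambda s: s[0]):  # nearest first
        weight = alpha * transmittance
        for c in range(3):
            color[c] += weight * rgb[c]
        transmittance *= 1.0 - alpha
        if transmittance < min_transmittance:  # early exit once the pixel is effectively opaque
            break
    return tuple(color), transmittance

# Usage: a half-transparent red splat in front of a half-transparent blue one
pixel, remaining = composite_pixel([(2.0, 0.5, (0.0, 0.0, 1.0)), (1.0, 0.5, (1.0, 0.0, 0.0))])
print(pixel, remaining)  # -> (0.5, 0.0, 0.25) 0.25
```

The early exit is what keeps dense scenes fast: once nearer splats have absorbed almost all light, farther ones no longer change the result.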

At the same time, requirements are changing. Instead of perfect individual models, scalability becomes the focus. Content must be created quickly, adapted, and integrated into existing systems.

This is where the true significance of this transformation lies.

3D capture evolves from a specialized process into a fundamental capability of digital systems. Reality becomes a data source rather than just a reference.

The next chapter analyzes how this data is captured in practice, the role of cameras, drones, and sensors, and why access to 3D technology is currently changing so rapidly.

How Reality Capture Becomes Scalable Today

The first chapter made it clear that the way reality is digitally represented is fundamentally changing. The second chapter focuses on the key prerequisite for this transformation: capturing reality itself.

For a long time, reality capture was a specialized field. High-quality 3D data required either industrial laser scanners or complex photogrammetry workflows with specialized software and significant time investment.

Today, this access is shifting.

New camera systems, mobile sensors, and AI-driven processing make it much easier to capture real environments. The entry barrier is decreasing, while the quality of results continues to improve.

A key driver of this development is the diversity of available capture systems.

- High-end systems such as drones with photogrammetry cameras enable large-scale and precise capture of complex environments

- 360° cameras and volumetric capture systems generate immersive datasets with high visual quality

- Mobile devices and new sensor solutions make 3D capture accessible for the first time across a wide range of applications

Modern reality capture systems, from professional drones to mobile devices in direct comparison

Subject: Strategic visualization | Representation: Drone with photogrammetry camera, 360° camera setup, and smartphone-based capture | Visualization: © Ulrich Buckenlei | Visoric GmbH

The image clearly shows this development. Different systems capture the same reality in different ways. While drones document large areas with precision, compact cameras and mobile devices enable flexible and fast data capture directly on site.

Technically, this progress is based on several parallel developments. High-resolution sensors, improved optics, and robust tracking methods such as Visual SLAM (simultaneous localization and mapping) enable continuous capture of movement and space. At the same time, Structure-from-Motion pipelines such as COLMAP or OpenMVG estimate camera poses and a consistent sparse 3D point cloud from overlapping individual images. [2][15]
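Geometrically, recovering 3D structure from images comes down to this: once camera poses are known, a feature seen in two images defines two viewing rays, and its 3D position is where those rays (nearly) meet. The sketch below uses the simple midpoint method and assumes camera centers and ray directions are already given; COLMAP and OpenMVG use more robust multi-view triangulation, and the function names are hypothetical.

```python
def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def triangulate_midpoint(c1, d1, c2, d2, eps=1e-12):
    """Midpoint triangulation of two viewing rays p = c + t * d.

    c1, c2: camera centers; d1, d2: ray directions toward the same image feature.
    Returns the point halfway between the closest points on the two rays.
    """
    w0 = [a - b for a, b in zip(c1, c2)]
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w0), _dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < eps:
        raise ValueError("rays are (nearly) parallel; depth cannot be recovered")
    t1 = (b * e - c * d) / denom  # ray parameter of the closest point on ray 1
    t2 = (a * e - b * d) / denom  # ray parameter of the closest point on ray 2
    p1 = [ci + t1 * di for ci, di in zip(c1, d1)]
    p2 = [ci + t2 * di for ci, di in zip(c2, d2)]
    return [(x + y) / 2 for x, y in zip(p1, p2)]

# Usage: two cameras 1 m apart observing the same feature
print(triangulate_midpoint((0, 0, 0), (1, 2, 5), (1, 0, 0), (0, 2, 5)))  # -> [1.0, 2.0, 5.0]
```

With noisy measurements the rays rarely intersect exactly, which is why the midpoint of their closest points is returned rather than an exact intersection.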

AI is also fundamentally changing the process. Systems automatically detect and match image features, refine camera poses, and improve the reconstruction with little or no manual intervention. This makes workflows not only faster but also more robust against errors. [5][8]
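Refining camera poses is commonly formulated as bundle adjustment, which adjusts camera parameters and 3D points to minimize the reprojection error: the pixel distance between where a 3D point projects and where the feature was actually observed. The sketch below only evaluates that objective for a pinhole camera with known intrinsics (fx, fy, cx, cy) and, as a simplification, an identity camera rotation; a full solver would also optimize rotations and typically uses robust loss functions. All names are hypothetical.

```python
def project(point_cam, fx, fy, cx, cy):
    """Pinhole projection of a point in camera coordinates (z axis pointing forward)."""
    x, y, z = point_cam
    if z <= 0:
        raise ValueError("point lies behind the camera")
    return fx * x / z + cx, fy * y / z + cy

def reprojection_error(points_world, observations, camera_center, fx, fy, cx, cy):
    """Sum of squared pixel residuals between projected 3D points and observed 2D features.

    Simplifying assumption: the camera's axes are aligned with the world axes
    (identity rotation), so only its position enters the computation.
    """
    total = 0.0
    for p, (u_obs, v_obs) in zip(points_world, observations):
        p_cam = tuple(pi - ci for pi, ci in zip(p, camera_center))
        u, v = project(p_cam, fx, fy, cx, cy)
        total += (u - u_obs) ** 2 + (v - v_obs) ** 2
    return total

# Usage: the true camera position reproduces the observations; a shifted one does not
points = [(0.0, 0.0, 5.0), (1.0, 0.0, 5.0)]
observed = [(320.0, 240.0), (420.0, 240.0)]
intrinsics = dict(fx=500.0, fy=500.0, cx=320.0, cy=240.0)
print(reprojection_error(points, observed, (0.0, 0.0, 0.0), **intrinsics))  # -> 0.0
print(reprojection_error(points, observed, (0.1, 0.0, 0.0), **intrinsics))  # about 200 (10 px off per point)
```

An optimizer would search for the camera position (and points) that drive this value toward zero; the automation described above largely consists of running such minimizations at scale.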

Another key factor is the integration of new hardware. Devices such as 360° cameras or specialized capture systems generate coherent datasets during recording itself. At the same time, developments in spatial video show that even consumer devices are increasingly capable of capturing spatial information. [13][14][16]

This fundamentally changes the role of data capture.

Capture is no longer seen as a preparatory step but as an integral part of digital processes. Data is created where it is needed and can be processed immediately.

The consequence is clear. 3D capture becomes scalable.

Companies are no longer dependent on creating individual models with high effort. Instead, real environments can be continuously captured, updated, and integrated into existing systems.

This development forms the foundation for the next step.

The following chapter analyzes how this data is processed technically and why Gaussian Splatting plays a central role.

How Reactive Interfaces Work Technically

When interfaces respond to movement and context, this may at first look like a mere visual effect. In reality, it rests on a clearly structured technical system, and the distinction is crucial: it is not about illusion but about the precise interaction of multiple components that together enable stable interaction.

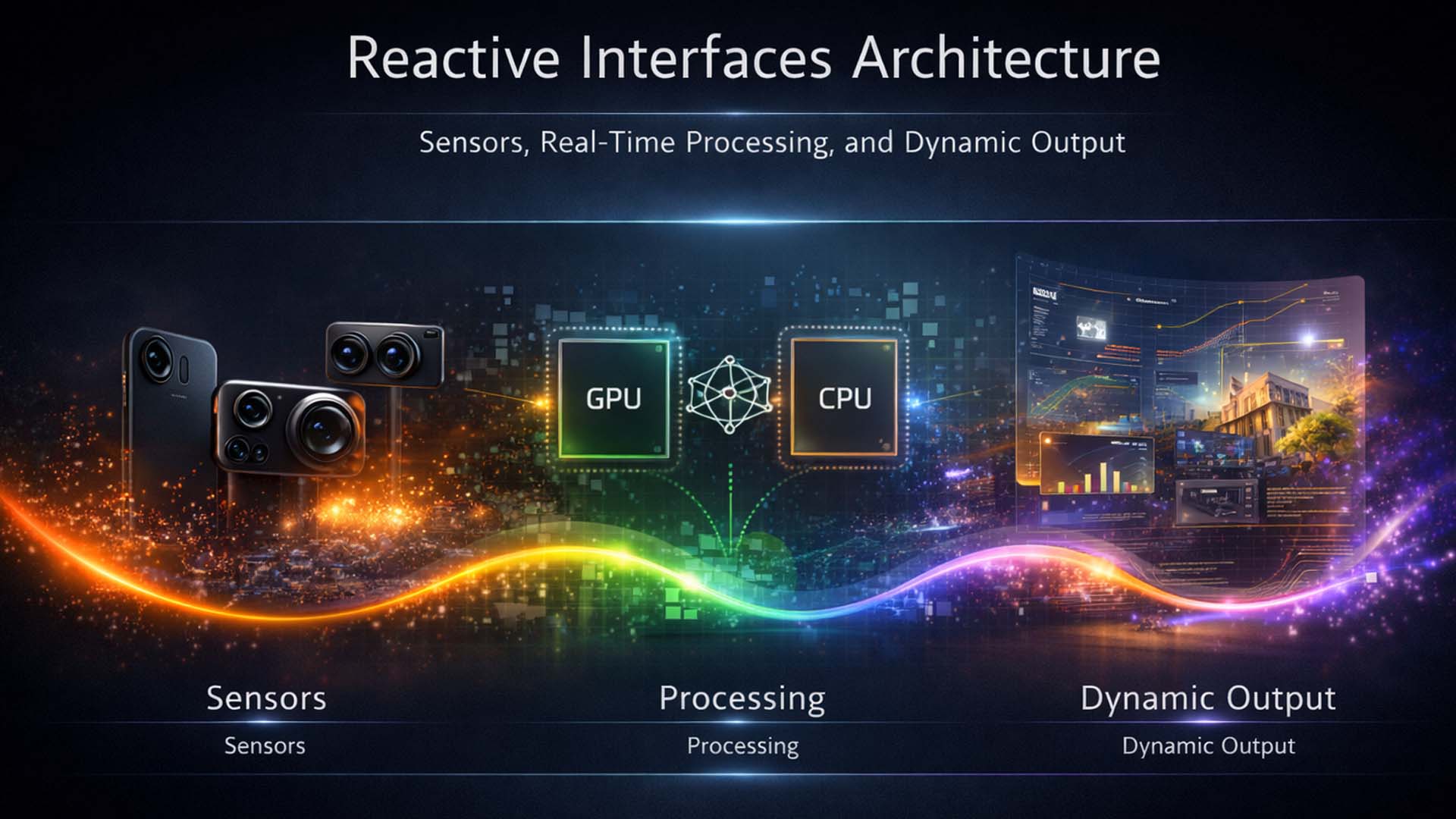

At its core, this system consists of three layers: sensing, processing, and visual output. Only when these elements work synchronously does the impression of a system reacting directly to the user emerge. [12]

Sensing acts as the input layer. Cameras or other sensors continuously capture the user’s position and movement. Without this data, the interface remains static and cannot react to context.

This is where the second layer comes into play. Processing happens in real time, often directly in the browser or locally on the device. AI models interpret the data and translate it into actionable information. Without this processing, movement would be captured but not meaningfully utilized. [16]
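One small but typical processing step is smoothing the raw positions delivered by a tracking model before they drive the camera. The sketch below uses simple exponential smoothing, assuming the tracker outputs a normalized (x, y) head position per frame; production systems often use adaptive filters instead, and the class name and parameters are illustrative only.

```python
import math

class PositionSmoother:
    """Frame-rate-independent exponential smoothing of a tracked 2D position.

    Hypothetical helper: `tau_s` is the time constant in seconds. A small value
    follows movement quickly but passes more sensor jitter through; a large value
    is steadier but adds noticeable lag.
    """

    def __init__(self, tau_s=0.08):
        self.tau_s = tau_s
        self.state = None  # last smoothed (x, y), None until the first sample

    def update(self, x, y, dt_s):
        if self.state is None:
            self.state = (x, y)  # initialise on the first measurement
            return self.state
        alpha = 1.0 - math.exp(-dt_s / self.tau_s)  # blend factor for this time step
        sx, sy = self.state
        self.state = (sx + alpha * (x - sx), sy + alpha * (y - sy))
        return self.state

# Usage: noisy normalized head positions arriving at roughly 30 Hz
smoother = PositionSmoother(tau_s=0.1)
for raw_x in (0.50, 0.54, 0.47, 0.52):
    print(smoother.update(raw_x, 0.5, dt_s=1 / 30))
```

Deriving the blend factor from the elapsed time, rather than using a fixed constant, keeps the behavior consistent when the browser's frame rate fluctuates. The time constant also makes the trade-off between jitter and latency explicit.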

The visual output is created by combining these two layers. An engine such as Three.js continuously adjusts perspective, camera, and rendering, creating the impression of depth and spatial presence. This is not real physical depth but a precisely calculated visual simulation. [11]
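One common way to create such a depth impression is head-coupled perspective: the virtual camera is placed at the tracked eye position and given an asymmetric (off-axis) frustum that passes through the edges of the physical screen. The sketch below computes the frustum bounds under stated assumptions (screen centred at the origin in the z = 0 plane, all dimensions in the same unit, viewer looking toward −z); the function name is hypothetical. In Three.js, bounds like these could, for example, be fed to `Matrix4.makePerspective`, which builds a projection matrix from explicit frustum bounds.

```python
def off_axis_frustum(eye, screen_w, screen_h, near):
    """Asymmetric frustum bounds (left, right, bottom, top) at the near plane.

    Assumptions: the physical screen is centred at the origin in the z = 0 plane,
    the eye is at (ex, ey, ez) with ez > 0, and the view direction is -z.
    """
    ex, ey, ez = eye
    if ez <= 0:
        raise ValueError("eye must be in front of the screen (ez > 0)")
    scale = near / ez  # similar triangles: map screen edges onto the near plane
    left = (-screen_w / 2 - ex) * scale
    right = (screen_w / 2 - ex) * scale
    bottom = (-screen_h / 2 - ey) * scale
    top = (screen_h / 2 - ey) * scale
    return left, right, bottom, top

# Usage: a 40 x 30 cm screen viewed from 60 cm, head centred vs. moved 10 cm to the right
print(off_axis_frustum((0.0, 0.0, 0.6), 0.4, 0.3, near=0.1))
print(off_axis_frustum((0.1, 0.0, 0.6), 0.4, 0.3, near=0.1))
```

When the head moves right, the frustum skews left, so nearby and distant virtual objects shift by different amounts on screen. That parallax is what the eye reads as depth, even though the display itself stays flat.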

- Sensing → captures user movement and position in real time

- Processing → interprets data locally and translates it into interaction logic

- Visual output → dynamically adapts rendering and creates spatial perception

Technical architecture of reactive interfaces, combining sensing, real-time processing, and dynamic rendering

Graphic: Editorial system visualization | Visualization: © Ulrich Buckenlei | Visoric GmbH

The graphic presents the system as a cohesive architecture. On the left is sensing, continuously capturing data. In the center, processing interprets this data and converts it into control logic. On the right, visual output dynamically adapts.

What matters is not only the technology itself but its stability. Latency, accuracy, and synchronization determine whether the system feels convincing. Even small delays can significantly affect perception. [7]
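The effect of latency can be made tangible with a simple budget. The sketch below sums per-stage delays of a sensing, processing, and rendering pipeline and checks them against the frame time and a target. The stage timings and the target value are placeholder assumptions for illustration, not measurements or recommended thresholds.

```python
def frame_time_ms(fps):
    """Time available for one frame at a given refresh rate."""
    return 1000.0 / fps

def check_latency(stage_ms, fps, target_ms):
    """Check a sensing -> processing -> rendering pipeline against a latency target.

    stage_ms: mapping of stage name to delay in milliseconds (assumed values).
    Returns the total delay, whether the 'render' stage fits into one frame,
    and whether the total stays within the target.
    """
    total = sum(stage_ms.values())
    render_fits = stage_ms.get("render", 0.0) <= frame_time_ms(fps)
    return total, render_fits, total <= target_ms

# Usage with hypothetical timings for a browser-based setup
stages = {"capture": 16.7, "inference": 12.0, "render": 8.0}
total, render_fits, ok = check_latency(stages, fps=60, target_ms=50.0)
print(f"{total:.1f} ms total, render fits frame: {render_fits}, within target: {ok}")
# -> 36.7 ms total, render fits frame: True, within target: True
```

Separating the two checks matters: rendering can comfortably hold the frame rate while the end-to-end delay from movement to visible response still exceeds what feels convincing.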

It also becomes clear that such systems do not operate in isolation. Data streams, rendering, and interaction logic must be precisely coordinated. Only through this integration does an interface become consistent and reliable.

Security and operational aspects also play a role. As soon as camera data is processed, requirements for data protection, access control, and system governance become part of the architecture. [4]

This makes it clear that reactive interfaces are not purely a frontend topic. They represent a system architecture that connects data, logic, and visualization. This distinction determines whether an interface merely impresses or truly works.

The next chapter examines in which concrete application areas these systems deliver real value.

Value and Use Cases of Reactive Interfaces

The previous chapter outlined how these systems are technically structured. Sensing, processing, and visual adaptation together create an interface that is no longer static. The key question now is in which situations this approach truly provides an advantage.

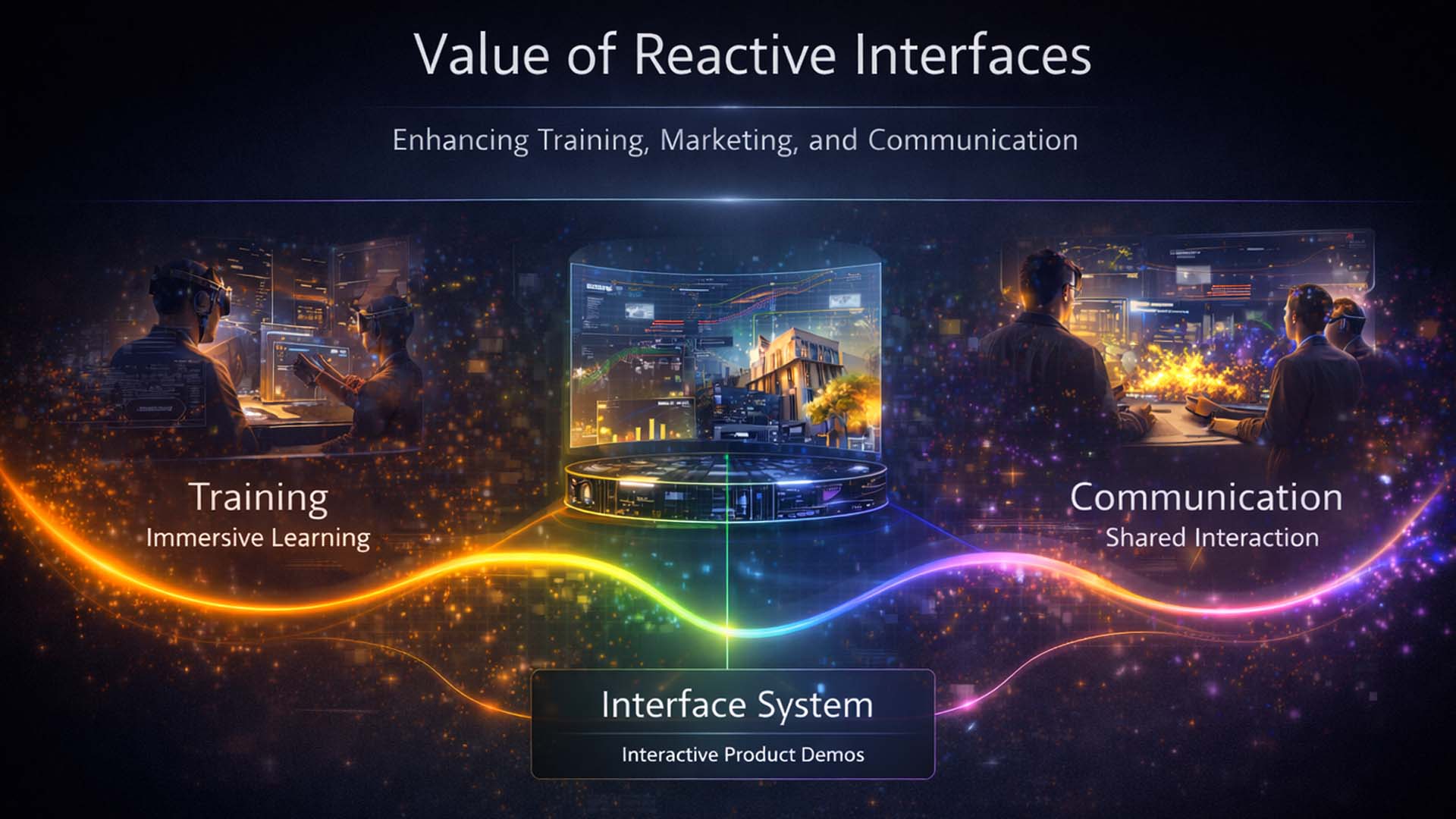

This is where practical value begins. Reactive interfaces are particularly powerful when information must not only be read but understood. As soon as movement, perspective, and context play a role, they offer clear advantages over traditional interfaces. [15]

- Training and simulation → interaction accelerates understanding of complex content

- Product presentation → systems respond to users and become more tangible

- Communication and decision-making → shared interaction improves understanding

Reactive interface use cases across training, communication, and interactive product presentation

Graphic: Editorial analysis | Visualization: © Ulrich Buckenlei | Visoric GmbH

The graphic condenses these use cases into a clear structure. At the center is the interface system, surrounded by contexts where value becomes most evident.

In training, dynamic adaptation enables faster understanding of complex topics. Users interact directly with the system rather than just consuming information. [17]

In sales and product visualization, systems respond to users, creating a stronger perception of quality and functionality.

In communication, a third effect emerges. When multiple people interact with a system, a shared reference point is created. Decisions are no longer based on individual screens but on shared interaction.

At the same time, limitations become visible. Not every application benefits from reactive interfaces. For standardized processes or precise data entry, traditional interfaces remain more efficient. [20]

Value therefore does not come from technology alone but from the right context of use. Companies that understand this distinction can apply interfaces where they truly make an impact.

The next chapter examines the requirements that arise when a demo evolves into a fully operational system.

From Prototype to Platform: Operational Requirements

Having covered the technological foundations and use cases, this chapter turns to an often underestimated dimension: the real challenge begins not with the demonstration but with operation.

A reactive interface can be impressive as a standalone setup. Once it is integrated into real processes, however, new requirements emerge that go beyond visualization. Systems must run reliably, be operated securely, and be embedded within organizational structures.

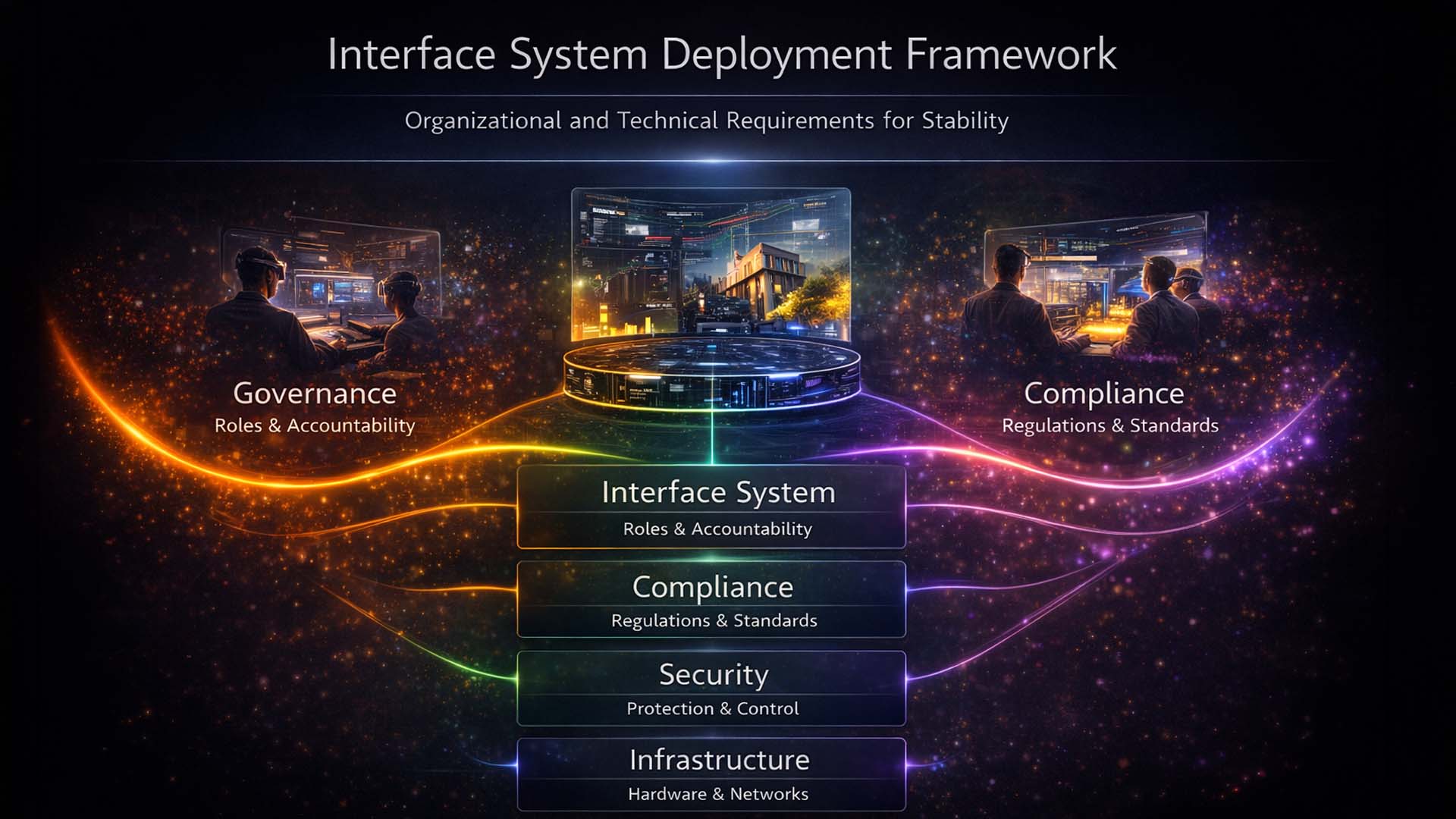

This is where the perspective changes. An interface becomes a system that must be planned, operated, and continuously optimized. Studies on technology integration show that structure and operations are critical for sustainable use. [15]

- From demo to operation → systems must function reliably over time

- From effect to structure → organization and processes become part of the solution

- From standalone application to platform → scalability becomes essential

Interface system deployment framework, organizational and technical requirements for stable operation

Graphic: Editorial framework analysis | Visualization: © Ulrich Buckenlei | Visoric GmbH

The graphic outlines the necessary layers for operating such systems.

Governance defines control. Roles, responsibilities, and decision processes must be clearly structured. [18]

Compliance covers regulatory requirements and standards, especially relevant when processing user data. [4]

Security addresses system protection, including data, access, and interfaces.

Operations describe ongoing processes such as monitoring, maintenance, and performance management.

Infrastructure forms the technical foundation, including hardware, networks, and runtime environments.

The key insight is clear. Success depends not on visualization but on the ability to operate these systems as platforms. Technology alone is not enough.

The next chapter explores the strategic implications for companies.

Gaussian Splatting as Digital Infrastructure

The previous chapters have outlined the technological development. The final chapter shifts the focus to strategic relevance. Gaussian Splatting is not just a new method of visualizing reality; it is evolving into a foundational infrastructure for digital systems.

This distinction is critical.

Many technologies remain isolated solutions, addressing specific problems without becoming part of long-term value creation. Gaussian Splatting follows a different logic. It connects data capture, visualization, and interaction into a continuous system. [3][4]

This creates a new foundation for digital applications.

- Reality capture becomes a continuous data source instead of a one-time process

- Visualization becomes part of operational systems rather than just presentation

- Interaction evolves from control to direct decision support

Gaussian Splatting as a connecting layer between reality, data, and digital platforms

Subject: Strategic visualization | Representation: physical world and data capture below, Gaussian Splatting as processing layer in the middle, applications such as digital twins, XR, and simulation above | Visualization: © Ulrich Buckenlei | Visoric GmbH

The graphic shows Gaussian Splatting not as an isolated technology but as a connecting layer within a digital architecture. At the bottom is the physical world, captured through cameras, sensors, and drones. Above it, a structured data layer emerges where information is processed and made accessible for applications.

Gaussian Splatting plays a central role by translating real environments into a form that is both visually intuitive and technically efficient.
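How efficient a splat scene is in practice depends heavily on its storage. A back-of-the-envelope calculation, assuming the parameterization of Kerbl et al. [1] (position, per-axis scale, rotation quaternion, opacity, and view-dependent color as spherical-harmonic coefficients up to degree 3) stored as uncompressed 32-bit floats, shows why compact formats and compression matter once splats become shared infrastructure. The function names are hypothetical, and real files may store additional fields.

```python
def sh_coefficients(degree):
    """Number of spherical-harmonic basis functions up to and including `degree`."""
    return (degree + 1) ** 2

def floats_per_gaussian(sh_degree=3):
    """Uncompressed per-Gaussian attributes in the parameterization of Kerbl et al."""
    position, scale, rotation, opacity = 3, 3, 4, 1  # xyz, per-axis scale, quaternion, alpha
    color = 3 * sh_coefficients(sh_degree)  # SH coefficients for each RGB channel
    return position + scale + rotation + opacity + color

def scene_size_mb(num_gaussians, sh_degree=3, bytes_per_float=4):
    """Raw storage in megabytes (10^6 bytes), ignoring file headers and compression."""
    return num_gaussians * floats_per_gaussian(sh_degree) * bytes_per_float / 1e6

print(floats_per_gaussian(3), scene_size_mb(1_000_000))  # -> 59 236.0
print(floats_per_gaussian(0), scene_size_mb(1_000_000, sh_degree=0))  # -> 14 56.0
```

Under these assumptions, view-dependent color accounts for most of the storage, so dropping or compressing higher-order coefficients is an obvious lever when scenes must be streamed or exchanged between platforms.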

On top of this, applications are built.

Digital twins, industrial simulations, and immersive XR experiences leverage this data and make it usable. Platforms such as NVIDIA Omniverse already demonstrate how such systems are applied in real production environments. [2]

At a strategic level, this development is becoming increasingly visible.

Analyses by McKinsey, Gartner, and the World Economic Forum indicate that digital systems are evolving toward spatial, context-based interaction. Data is no longer only analyzed but made directly experiential. [19][20][21]

This also changes the role of companies.

Those who adopt these technologies early gain not only a visual advantage but a new way of using data. Processes become more transparent, decisions faster, and systems more flexible.

At the same time, a clear trend emerges.

Reality capture is becoming the foundation of many digital business models. Gaussian Splatting acts as the bridge between the physical and digital world.

The key insight is clear.

Gaussian Splatting is not a feature.

It is infrastructure.

This completes the narrative of this article. From capture to processing to strategic perspective, a coherent picture emerges of a technology that is not only transforming visualization but fundamentally changing how digital systems are built and used.

From Capture to Experience

The following video provides a concise overview of how reality capture, new 3D representations, and AI-driven processing work together today. At its core is Gaussian Splatting, a new approach that not only captures real scenes but also makes them more flexible, performant, and visually compelling. [22]

Rather than focusing on a single workflow, the video highlights the breadth of the field. It combines camera-based capture, volumetric reconstruction, and new presentation formats into a comprehensive view that effectively reflects the current momentum of this technology. [22][23]

At the same time, it shows why this topic is gaining relevance. Reality capture is evolving from a specialized process into a scalable foundation for visualization, simulation, and new digital applications. This also highlights the role of new hardware and capture systems that can generate spatial data faster and more accessibly. [22][24]

The video illustrates a central transformation. Reality is no longer just documented but becomes a directly usable data foundation for modern 3D systems.

Reality Capture and Gaussian Splatting, the video condenses current developments from real-world capture to high-performance 3D rendering

Video source: Corridor Crew, “THIS is the Biggest Thing Since CGI” | Additional references: 4DV.ai and Antigravity A1 360 Drone | Analysis: Ulrich Buckenlei

This development clearly shows the direction of digital systems. Reality becomes a direct data foundation that can be seamlessly transformed into new visual and interactive applications.

Sources and References

- Kerbl et al., 3D Gaussian Splatting, SIGGRAPH, 2023. [1]

- Peng et al., RTG-SLAM with Gaussian Splatting, SIGGRAPH, 2024. [2]

- Wei et al., 4D Gaussian Splatting, SIGGRAPH, 2024. [3]

- NVIDIA, Real-Time Gaussian Splatting, 2025. [4]

- NVIDIA, AI & RTX for Reality Capture, 2024. [5]

- Khronos Group, Gaussian Splatting Extension (glTF), 2026. [6]

- Bentley Systems, Gaussian Splatting & Digital Twins, 2026. [7]

- FOV Ventures, Gaussian Splatting as a data layer, 2023. [8]

- Wingtra, LiDAR vs Photogrammetry, 2024–2026. [9]

- Anvil Labs, Drone comparison LiDAR vs Photogrammetry, 2026. [10]

- DJI, LiDAR systems L1–L3, 2025. [11]

- DJI, Photogrammetry camera P1, 2023. [12]

- Insta360, Titan 11K VR camera, 2024–2026. [13]

- XGRIDS, Lixel Portal 3D Capture, 2025. [14]

- COLMAP / OpenMVG, Photogrammetry pipelines, 2024–2026. [15]

- Apple, Spatial Video & Computing, 2024–2026. [16]

- Meta Reality Labs, Spatial Capture & Interaction, 2024–2026. [17]

- Microsoft Research, Mixed Reality & Computer Vision, 2024–2026. [18]

- McKinsey, Technology Trends Outlook, 2025. [19]

- Gartner, Emerging Technologies & Spatial Computing, 2025–2026. [20]

- World Economic Forum, AI & immersive technologies, 2024–2025. [21]

- Corridor Crew, “THIS is the Biggest Thing Since CGI”, YouTube, published March 29, 2026. [22]

- 4DV.ai, Volumetric Capture and Reconstruction, referenced in the video and description, accessed 2026. [23]

- Antigravity, A1 360 Drone, referenced in the video description as capture hardware, accessed 2026. [24]

From Reality Capture to Real Business Impact

Gaussian Splatting, AI, and spatial computing are currently transforming how companies use reality digitally. Data is no longer just captured but becomes directly experiential and usable in real time.

The key question today is no longer whether these technologies are relevant but how quickly they can be applied effectively.

Many companies already have initial approaches. However, the path to real application is often missing. Data remains unused, and prototypes do not reach operational deployment.

This is exactly where VISORIC comes in.

From idea to scalable solution, VISORIC connects technology, data, and application

Source: VISORIC GmbH | Munich

We support companies in integrating new technologies into real processes in a targeted way.

- Strategy → Clear roadmap instead of isolated ideas

- Reality Capture → Real world as a digital data foundation

- AI Systems → Making data intelligently usable

- Interactive Applications → Making content experiential

- Simulation → Understanding and optimizing processes

- Integration → Seamless integration into existing systems

- Prototyping → Test quickly and make informed decisions

The difference lies in execution.

Companies that start now gain clear advantages in efficiency, speed, and decision quality.

Learn more about our Gaussian Splatting solutions:

www.visoric.com/gaussian-splatting

Or use the contact form to develop concrete approaches for your business.

Contact Persons:

Ulrich Buckenlei (Creative Director)

Mobile: +49 152 53532871

Email: ulrich.buckenlei@visoric.com

Nataliya Daniltseva (Project Manager)

Mobile: +49 176 72805705

Email: nataliya.daniltseva@visoric.com

Address:

VISORIC GmbH

Bayerstraße 13

D-80335 Munich