Programmable Cinema, real capture versus model-generated scene

Visualization: © Ulrich Buckenlei | Visoric GmbH | Editorial concept image illustrating the shift from camera-based production to model-based generation | The juxtaposition serves analytical classification and does not claim technical completeness

In recent months, a provocative claim has circulated in media discussions: a visually elaborate film scene that would traditionally cost several million dollars could be generated with artificial intelligence for just a few cents. The figures sound spectacular. They are shared, debated, and often cited as proof that AI will fundamentally transform the film industry.[16]

Regardless of whether individual cost comparisons are precisely accurate, this debate points to a deeper structural question. If certain scenes no longer necessarily require physical infrastructure, shooting locations, specialized equipment, and extensive logistics, but can instead emerge from models, parameters, and computational power, does the production logic itself begin to shift?[1]

Programmable Cinema describes exactly this shift. It is not primarily about sensational numbers, but about the relocation of bottlenecks. In traditional productions, the bottleneck lies in capital intensity, coordination, and physical infrastructure. In model-based generation, dominant cost and competency factors move toward model quality, datasets, iteration speed, and creative control.[5]

Costs do not disappear. They change their structure. While physical resources may lose relative importance, algorithmic precision, compute power, and the ability to iterate rapidly gain weight. Competition then arises less from sheer budget size and more from the capability to formulate models coherently and systematically evolve visual worlds.[9]

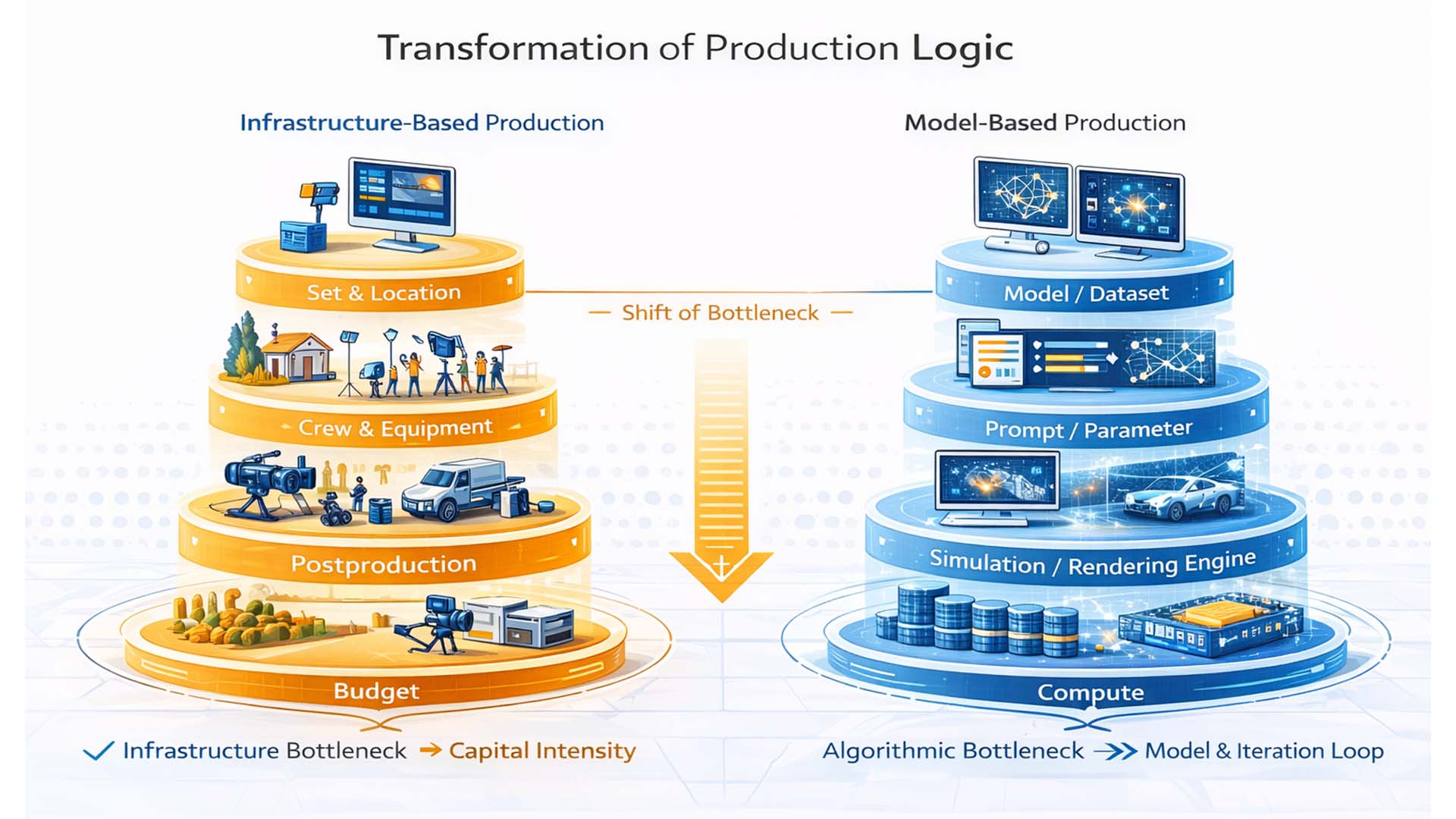

From Set to Model Architecture – How the Production Bottleneck Shifts

Traditional film production is organized around infrastructure. It requires real locations, technical equipment, specialized teams, transport, insurance, and post-production. The cost structure is largely determined by physical factors. The more spectacular the scene, the higher the resource input tends to be.[10]

This model is not inefficient, but it is capital-intensive. The bottleneck lies in access to infrastructure. Large productions can create elaborate visuals because they command budget, logistics, and organization. Smaller players quickly encounter structural limits.

With the introduction of generative models, this structure changes. Visual complexity increasingly emerges from model and dataset quality, precise prompt and parameter control, simulation and rendering architecture, and available compute power.[7]

The bottleneck thus shifts from physical infrastructure to algorithmic architecture. What decides is no longer the size of the set, but the quality of the model and the ability to iterate rapidly.

Transformation of production logic. Infrastructure-based production versus model-based generation

Visualization: © Ulrich Buckenlei | Visoric GmbH | Editorial concept graphic illustrating the shift from capital-intensive set production to model-driven, compute-based generation. The depiction serves analytical classification and does not claim technical completeness.

The graphic condenses this shift. While set, crew, post-production, and budget appear as dominant cost drivers on the left, the focus on the right moves to model, parameters, simulation, and compute. The infrastructural bottleneck is replaced by an algorithmic one.

This does not mean that real production disappears. Rather, a hybrid landscape emerges in which physical shoots and model-based generation coexist. The decisive factor becomes which production logic is economically more meaningful under specific conditions.[15]

As soon as spectacular images no longer necessarily require physical infrastructure, the competitive logic changes. It is not the highest budget that guarantees visual dominance, but the ability to coherently control models, precisely articulate creative vision, and implement iterations quickly.

The next chapter analyzes under which conditions this shift leads to a sustainable economic transformation.

Economics of Model Architecture. When AI Production Is Truly More Cost-Effective

In the previous section, the structural shift of the production bottleneck was described. Now the crucial question arises: under what conditions is model-based generation economically superior?

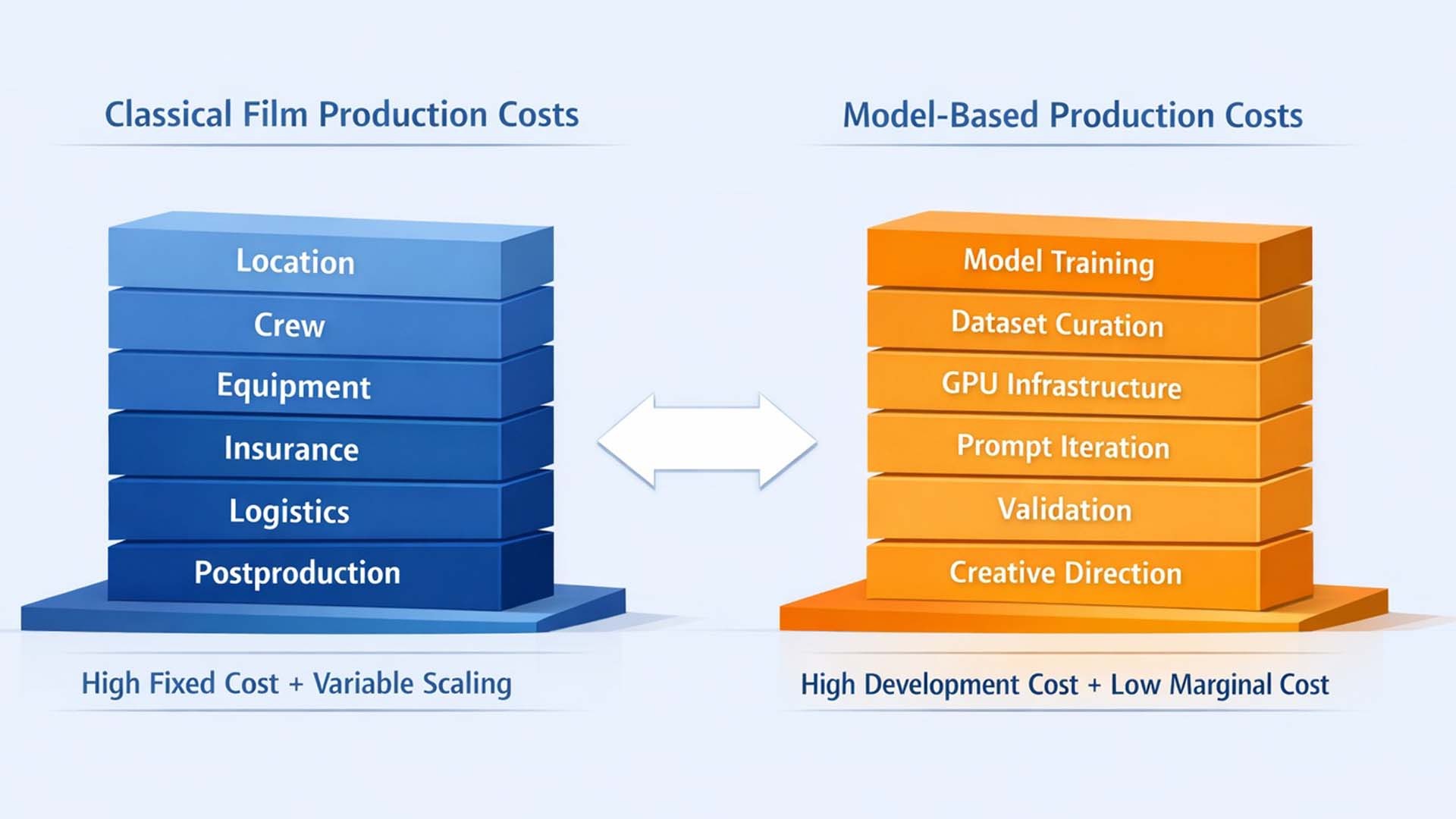

Public debate often focuses on extreme figures. Yet what matters economically is not the isolated cost of tokens, but the complete production system. AI-based generation also requires infrastructure: training data, model access, compute power, iterations, quality control, and creative supervision.[16]

The real transformation therefore lies not in absolute cost elimination, but in the variability of the cost structure. Traditional film production incurs high fixed costs before a single scene is created. Model-based production shifts part of these fixed costs into scalable computational processes. This means that the more a scene is iterated, varied, or scaled, the greater the potential economic advantage.[1]

- Scaling reduces marginal costs, but increases requirements for model quality

- Iteration becomes the core mechanism, not a downstream correction

- Creative control shifts into prompting, parameter steering, and reference logic

- Quality assurance becomes the new “set discipline” in model-based pipelines

Economic structural shift. Fixed-cost infrastructure versus scalable model-based generation

Visualization: © Ulrich Buckenlei | Visoric GmbH | Editorial concept graphic illustrating the shift in film production cost structures. Schematic depiction of the transition from physical infrastructure to model-based iteration and compute. The graphic serves analytical classification and does not claim technical precision.

The graphic makes visible why catchy “cent” comparisons often fall short. In traditional productions, costs arise along a physical chain of set, crew, equipment, and post-production. In model-based pipelines, the focus moves into a different stack: model and dataset, prompt and parameter control, simulation and rendering, as well as compute. Costs are not eliminated, but redistributed.

The decisive factor is the underlying business logic. Model-based production can be superior where variants, adjustments, and rapid iterations are standard. Where a one-time execution with a clearly defined shoot suffices, traditional production may remain efficient.
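The trade-off between one-time execution and repeated variation can be made concrete with a simple break-even calculation. The following is a minimal sketch, assuming a linear cost model with a one-off setup cost and a constant cost per variant. The class `CostModel`, the function `break_even_variants`, and all figures are hypothetical and chosen only to make the arithmetic visible; they are not taken from any real production.

```python
from dataclasses import dataclass
from math import ceil
from typing import Optional


@dataclass(frozen=True)
class CostModel:
    """Linear cost model: a one-off setup cost plus a cost per delivered variant."""
    setup_cost: float
    cost_per_variant: float

    def total(self, variants: int) -> float:
        return self.setup_cost + self.cost_per_variant * variants


def break_even_variants(traditional: CostModel, model_based: CostModel) -> Optional[int]:
    """Smallest variant count (>= 1) at which model-based is no more expensive."""
    if model_based.total(1) <= traditional.total(1):
        return 1
    saving_per_variant = traditional.cost_per_variant - model_based.cost_per_variant
    if saving_per_variant <= 0:
        return None  # model-based never catches up under these assumptions
    extra_setup = model_based.setup_cost - traditional.setup_cost
    return ceil(extra_setup / saving_per_variant)


# Hypothetical figures: the model-based setup is assumed to include pipeline
# construction, model adaptation, and quality control.
traditional = CostModel(setup_cost=50_000, cost_per_variant=40_000)
model_based = CostModel(setup_cost=120_000, cost_per_variant=2_000)

for n in (1, 2, 5):
    print(n, traditional.total(n), model_based.total(n))
print("break-even at", break_even_variants(traditional, model_based), "variants")
```

With these invented numbers, the traditional route is cheaper for a single execution, while the model-based route pulls ahead as soon as a second variant is needed. With different assumptions the function may return 1 (model-based is cheaper from the start) or `None` (it never catches up), which is exactly why the business logic, not the headline figure, decides.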

The next section analyzes how this cost migration affects competition, value creation, and the role of IP, distribution, and creative originality.

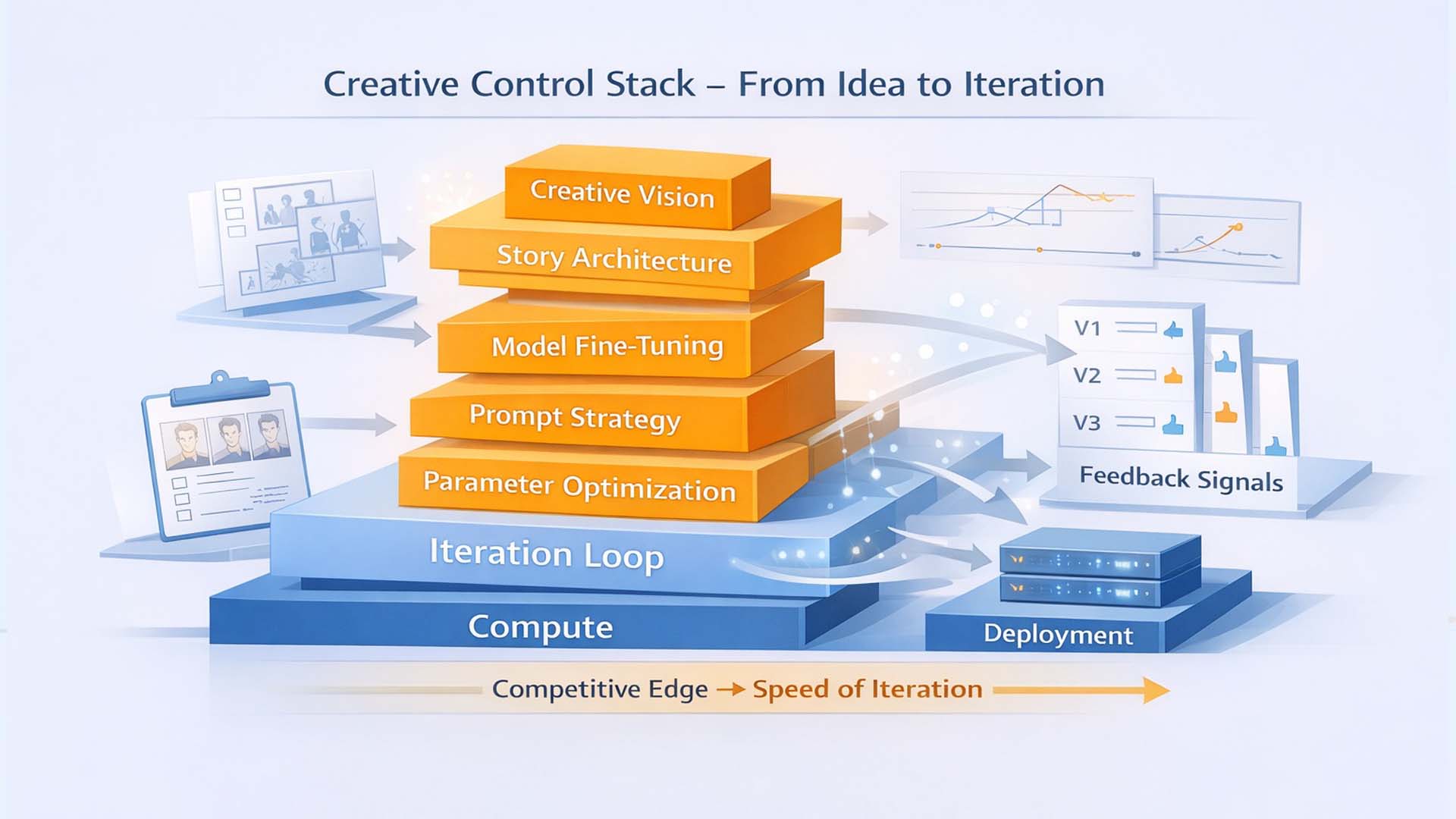

Creative Control Stack, From Idea to Iteration

The discussion about drastically falling production costs often obscures where the actual shift occurs. Not every image automatically becomes inexpensive simply because a model can generate it. What matters is how well a production organizes the transition from creative intent to reproducible control. In a model-based pipeline, film production becomes a control problem in which vision, dramaturgy, and visual consistency are managed as iterable parameters. [2][4]

The following concept graphic condenses this logic into a stack. At the bottom lie compute and an iteration loop, above them the layers in which creative decisions are translated into machine-readable control. On the right, feedback signals appear as a systematic return channel that integrates variant comparison, quality measurement, and approval into the process. The message is clear. Competition arises where iterations become faster, more targeted, and more consistent. [3][6]

Creative control stack, how vision becomes iterable control

Visualization: © Ulrich Buckenlei | Visoric GmbH | Editorial concept graphic illustrating the control logic of model-based film production, from creative intent through prompt and parameters to the iteration loop. No claim to technical precision

The upper layers represent creative intentionality. Creative Vision and Story Architecture define what a scene should mean, which perspective it creates, and which emotional logic it carries. Only when this level is clearly articulated does technical optimization below make sense. Without clear dramaturgical target images, iteration may be fast, but directionless. [5][7]

Beneath lie the translation layers. Prompt Strategy describes how vision is transferred into repeatable instructions and constraints. Parameter Optimization stands for controlled variation, such as camera, lighting, movement, timing, stylistic rules, or consistency anchors across multiple shots. Model Fine Tuning symbolizes the point at which a generic model is transformed into a robust production language through data, references, or targeted adaptation. Here arises the capability not merely to generate individual images, but to construct a coherent sequence world. [6][8]
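Controlled variation with fixed consistency anchors can be illustrated as a small data structure. The sketch below is only one possible way to model it; the names `ConsistencyAnchors`, `ShotParameters`, and `vary`, as well as all field names and values, are assumptions made for illustration and do not correspond to any specific tool.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ConsistencyAnchors:
    """Elements that must stay identical across all shots of a sequence."""
    style_reference: str
    character_sheet: str
    color_palette: str


@dataclass(frozen=True)
class ShotParameters:
    """Parameters that may be varied per shot or per variant."""
    shot_id: str
    camera: str
    lighting: str
    movement: str
    duration_s: float
    anchors: ConsistencyAnchors


def vary(base: ShotParameters, **changes) -> ShotParameters:
    """Return a variant of a shot; the consistency anchors cannot be changed."""
    if "anchors" in changes:
        raise ValueError("consistency anchors are fixed across variants")
    return replace(base, **changes)


# Hypothetical example values.
anchors = ConsistencyAnchors(
    style_reference="ref_board_v3",
    character_sheet="driver_a_v2",
    color_palette="teal_orange",
)
base_shot = ShotParameters(
    shot_id="sc12_sh04",
    camera="low angle, 35mm",
    lighting="overcast noon",
    movement="tracking left",
    duration_s=4.0,
    anchors=anchors,
)
dusk_variant = vary(base_shot, shot_id="sc12_sh04_b", lighting="dusk, low sun")
```

Separating what may vary from what must not vary makes the difference between generating individual images and building a coherent sequence explicit: every variant carries the same anchors by construction.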

The base of the stack is the Iteration Loop. It connects compute, versioning, and evaluation into a production routine. Iteration in this context does not mean trial and error, but a structured sequence: hypothesis, variant, evaluation, decision, next variant. That is why Feedback Signals on the right are central. They stand for tests, approvals, consistency checks, quality metrics, and human judgments that assess variants not only technically, but also narratively. Deployment marks the transition from the laboratory of iteration into a distributable pipeline for marketing, series production, previsualization, or final sequences. [2][9]
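The sequence of hypothesis, variant, evaluation, and decision can be sketched as a loop with an explicit approval criterion. This is a minimal illustration, not a production routine: `iterate`, `Evaluation`, `toy_evaluate`, and `toy_propose` are hypothetical names, and the scoring function is a deliberately trivial stand-in for what would in practice combine quality metrics, consistency checks, and human review.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Params = Dict[str, float]


@dataclass
class Evaluation:
    params: Params
    score: float
    approved: bool


def iterate(
    initial: Params,
    propose: Callable[[Params], Params],
    evaluate: Callable[[Params], float],
    approval_threshold: float,
    max_rounds: int,
) -> List[Evaluation]:
    """Evaluate a variant, record the decision, and derive the next variant."""
    history: List[Evaluation] = []
    best = None
    current = initial
    for _ in range(max_rounds):
        score = evaluate(current)
        result = Evaluation(dict(current), score, score >= approval_threshold)
        history.append(result)  # versioned record of every variant
        if best is None or score > best.score:
            best = result
        if result.approved:
            break
        current = propose(best.params)  # next hypothesis builds on the best so far
    return history


# Toy stand-ins (assumptions): the "target look" is a brightness of 0.7.
def toy_evaluate(params: Params) -> float:
    return 1.0 - abs(params["brightness"] - 0.7)


def toy_propose(params: Params) -> Params:
    return {"brightness": round(params["brightness"] + 0.1, 2)}


history = iterate({"brightness": 0.4}, toy_propose, toy_evaluate,
                  approval_threshold=0.95, max_rounds=10)
for step in history:
    print(step.params, round(step.score, 2), step.approved)
```

The point of the sketch is the shape of the routine rather than the toy metric: every variant is recorded, every decision is tied to a stated threshold, and the loop ends either with an approval or after a fixed budget of rounds.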

From this logic, three practical consequences can be derived that are more relevant for companies than catchy price comparisons.

- Creative controllability → Vision is operationalized as a repeatable set of rules and decisions

- Iterability → Variants emerge along defined target metrics and comparison criteria, not randomly

- Feedback channel → Feedback signals make quality measurable and prevent speed from destroying coherence

This also clarifies why the bottleneck in many projects lies not in generation, but in control. Anyone seeking consistent visual worlds, serial storytelling, and brand-consistent aesthetics needs a stack that translates creativity into stable production logic. This is where it is decided whether model-based production remains an experiment or becomes a scalable method. [3][6]

The following section therefore clarifies which limits this control logic still has today, where real production remains superior, and which governance issues arise once models become a productive part of the pipeline. [10][11]

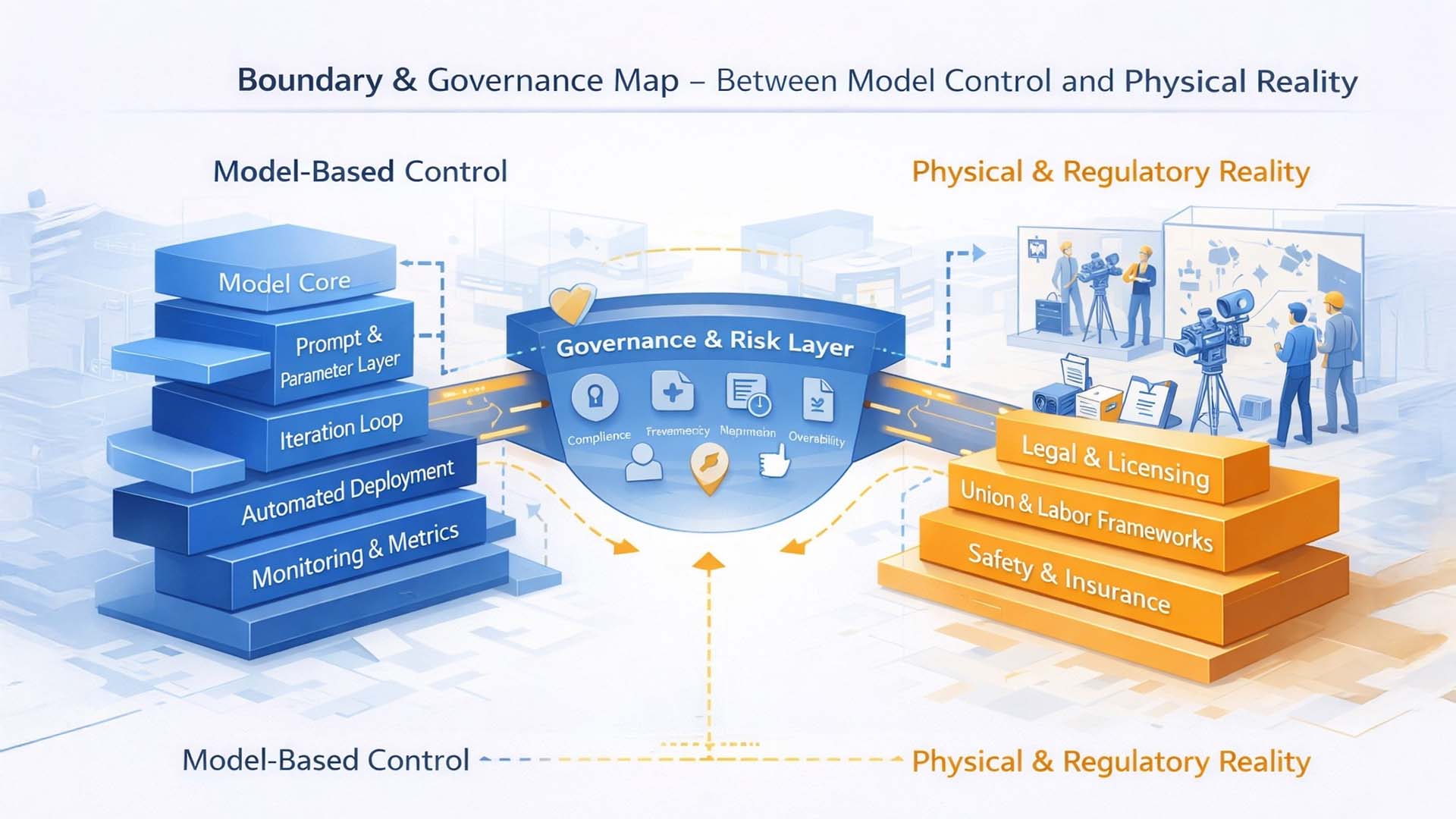

Limits, Real Superiority, and Governance Questions of Model-Based Production

The shift from physical infrastructure to model-based control logic does not mean that real production becomes obsolete. It does, however, change the conditions under which audiovisual content is created. For this very reason, it is necessary to clearly define the current limits of this architecture.

Model-based generation today primarily encounters systemic limits where physical interaction, authentic materiality, or complex social dynamics are decisive. Real stunts, documentary situations, or highly improvisation-driven scenes continue to benefit from physical presence and immediate environmental interaction. AI can simulate these contexts, but not fully substitute them.[4]

A second boundary lies in data dependency. Generative systems are only as robust as their training data. Biased, incomplete, or legally problematic datasets can lead to aesthetic distortions, ethical conflicts, or copyright risks. Responsibility therefore does not disappear from humans, but shifts into new competence fields of data curation and model supervision.[5][12]

Once models become a productive part of the pipeline, governance questions arise that extend beyond pure technology. Who is liable for generated content? How is transparency about training data ensured? What role do auditability and logging play? These questions are not hypothetical, but already part of regulatory debates at the European level.[12][13]
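What auditability and logging could look like at the level of a single generation run can be sketched with a tamper-evident log. The example below is an illustration under assumptions, not a compliance implementation: the field names, the functions `audit_record` and `verify_chain`, and the example values are hypothetical, and nothing here is derived from a specific regulatory requirement.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Dict, List


def _digest(body: Dict) -> str:
    serialized = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(serialized).hexdigest()


def audit_record(prompt: str, parameters: Dict, model_version: str,
                 operator: str, previous_hash: str) -> Dict:
    """Create a log entry that is chained to the previous entry by its hash."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "operator": operator,
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "parameters": parameters,
        "previous_hash": previous_hash,
    }
    return {**body, "record_hash": _digest(body)}


def verify_chain(records: List[Dict]) -> bool:
    """Check that no entry was altered and that the order is intact."""
    previous = ""
    for record in records:
        body = {k: v for k, v in record.items() if k != "record_hash"}
        if record["previous_hash"] != previous:
            return False
        if _digest(body) != record["record_hash"]:
            return False
        previous = record["record_hash"]
    return True


# Hypothetical example entries.
log: List[Dict] = []
log.append(audit_record("racing scene, dusk", {"seed": 7}, "model-x-1.0", "editor_a", ""))
log.append(audit_record("racing scene, rain", {"seed": 8}, "model-x-1.0", "editor_a",
                        log[-1]["record_hash"]))
print(verify_chain(log))
```

Chaining each entry to the hash of the previous one means that a later change to any logged prompt hash, parameter set, or model version becomes detectable, which is one simple way to support traceability questions of the kind raised above.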

Limits and governance of model-based film production

Visualization: © Ulrich Buckenlei | Visoric GmbH | Editorial concept graphic illustrating technical, creative, and regulatory tensions in AI-supported media production. The graphic serves analytical classification and does not claim technical completeness.

The graphic intentionally presents this tension as a triangle between creative control, regulatory oversight, and technical limitation. At the center is not the model itself, but the question of responsibility architecture. While creative control logic becomes increasingly powerful, demands for transparency, safety mechanisms, and traceability grow simultaneously.

The decisive distinction lies between technical possibility and institutional legitimacy. Just because a scene can be generated does not automatically mean it is legally, ethically, or commercially viable. In safety-critical or highly regulated industries in particular, new review obligations emerge, comparable to other high-risk AI applications.[12]

Model-based production is therefore not a free pass for limitless automation. It requires new professional roles: model owners, data curators, prompt architects, and governance managers. Competitiveness arises not solely from creative speed, but from responsible system integration.

The following section analyzes which strategic positionings emerge from this development and which actors structurally benefit.

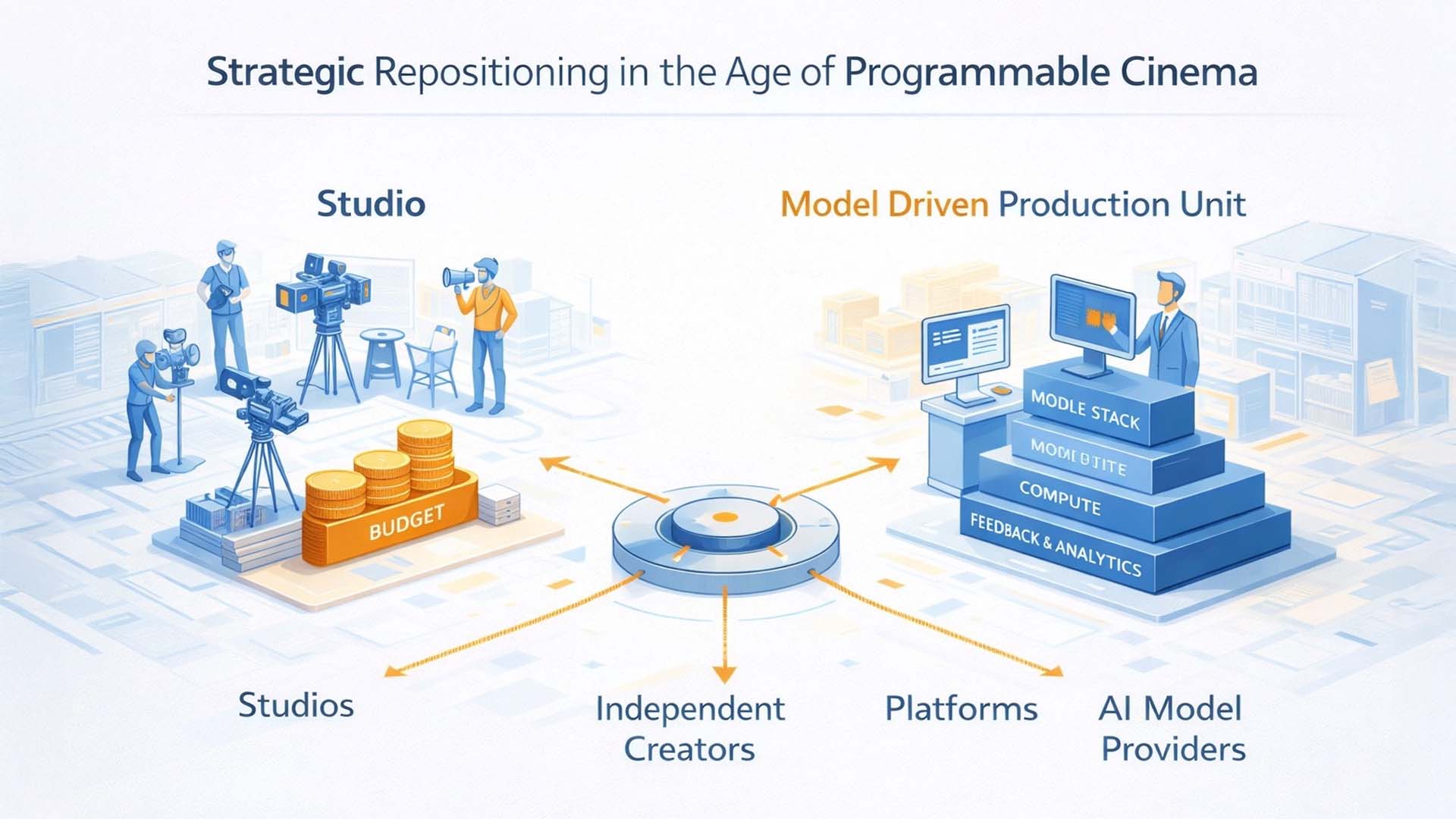

Strategic Repositioning in the Age of Programmable Cinema

If the production bottleneck shifts from physical infrastructure to model architecture, it is not only the technology that changes, but also the strategic landscape of the industry. The question is no longer primarily who has access to studio space, equipment, or capital. What becomes decisive is who masters models, accelerates iterations, and systematically builds creative control logic.[1][3]

In traditional production structures, market power was closely linked to capital intensity. Studios dominated because they could bundle infrastructure, finance risk, and control distribution. Model-based production systems partially decouple this relationship. Value creation shifts toward data-driven model expertise, creative orchestration, and platform integration.[4]

New strategic actor groups emerge:

- Studios → Transformation from infrastructure operators to model and IP orchestrators

- Independent creators → Scaling through model-based production pipelines

- Platforms → Control over distribution, feedback data, and iteration speed

- Model providers → New power centers through foundation models and compute infrastructure

The strategic shift therefore affects not only production costs, but the structure of competition. Those with access to high-quality models, curated datasets, and powerful compute infrastructure can accelerate creative processes and validate experimental formats more quickly. Speed becomes an independent competitive dimension.[10]

Strategic repositioning in the model age of film production

Visualization: © Ulrich Buckenlei | Visoric GmbH | Editorial concept graphic illustrating the strategic shift from infrastructure-based film production to model-driven value creation. The depiction serves analytical classification and does not claim technical completeness.

The graphic clarifies this reconfiguration. On the left stands the classic linear value chain. On the right emerges a network model in which creative control, foundation models, platform distribution, and audience interaction are dynamically interconnected. Value no longer arises exclusively along a linear production chain, but within iterative feedback loops.

Particularly relevant is the role of feedback signals. Platform data, usage metrics, and interaction patterns flow directly back into model optimization. Production thereby becomes not only more cost-efficient, but more adaptive. Content can be further developed based on data before being fully rolled out.

At the same time, new dependencies arise. Those who control models potentially control creative parameters. Those who control compute control scalability. These concentration risks are structurally comparable to other AI-driven industries and raise long-term competition and regulatory questions.[12][13]

The strategic conclusion is therefore clear: success will not necessarily belong to the largest capital provider, but to the actor who coherently integrates creative vision, model architecture, and governance. Programmable Cinema is less a technological gimmick and more a reorganization of power, speed, and creative control.

The next section examines whether this shift leads to a sustainable economic transformation or whether hybrid production forms will dominate in the long term.

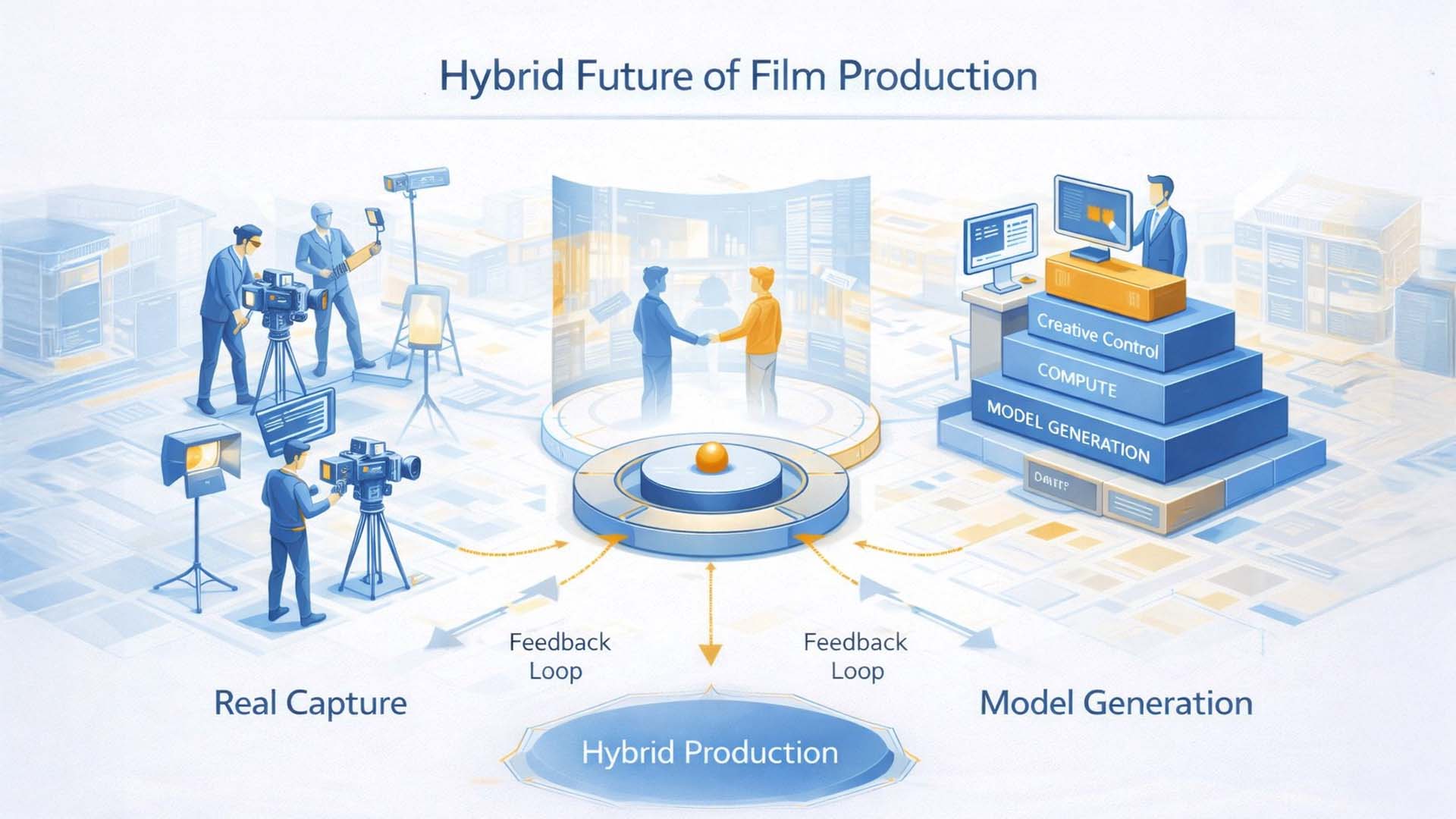

Hybrid Production Architectures and the New Interplay of Set and Simulation

The debate about model-based generation is often framed as an either-or choice: either traditional film production with real sets, crew, and camera, or fully synthetic visual worlds from models and compute. In practice, however, a third space is emerging: the hybrid production architecture, in which both worlds are deliberately interconnected.[7]

This configuration is precisely what the graphic visualizes. On the left, a real set is suggested, with camera, lighting, physical performance, and tangible environment. On the right stands model-based generation, with simulation, generative models, and rendering. In the center lies a connecting layer, an integration and control layer that merges real captures with synthetic extensions, variations, and iterations.[11]

Hybrid production architecture, integration of real set, simulation, model control, and distribution

Visualization: © Ulrich Buckenlei | Visoric GmbH | Editorial concept graphic illustrating the fusion of physical film production with model-based generation and compute infrastructure. The depiction serves analytical classification and does not claim technical precision.

The graphic makes visible three layers that collaborate and reinforce one another within hybrid pipelines.[7]

- Physical layer: real set, camera, lighting, human performance

- Model and simulation layer: generative models, rendering, scene extension

- Integration and control layer: data pipelines, iteration logic, distribution

The real set does not disappear. It becomes an input node within an expanded system. Acting, physical interaction, and authentic dynamics can be captured and subsequently extended, condensed, or transformed into variants through model-based processes. Conversely, fully synthetic sequences can be generated and deliberately merged with real material when stylistic continuity and coherent visual logic are precisely controlled.[8]

Economically, this hybrid logic is plausible because it does not eliminate risks and costs wholesale, but redistributes them. Real production remains efficient where unique scenes, authenticity, and physical complexity create added value. Model-based extension becomes powerful where variation, speed of adaptation, and scalability are decisive. In many productions, the advantage therefore lies in combination rather than substitution.[5][15]

At the same time, hybrid pipelines increase the need for governance. Once models are productively deployed, data provenance, rights, transparency, and safety mechanisms must be considered to prevent speed from turning into reputational and compliance risks.[12][14]

This makes one thing clear: it is not about abolishing film production. It is about reconfiguring it. What was described in theory as a structural shift can be observed in the following video as a pointed example.[16]

Video Analysis – From the Million-Dollar Scene to AI Iteration

The following video addresses a provocative claim: a visually elaborate Hollywood racing scene worth several million US dollars was reproduced with artificial intelligence for just a few cents. Regardless of the exact figure, this juxtaposition points to a structural shift in the production economics of audiovisual content.[1][17]

For decades, high-speed sequences required enormous budgets, complex logistics, specialized teams, and months of coordination. Generative video models increasingly condense this process chain into compute power, model architecture, and iteration capability. This shift affects not only cost structures, but also the logic of value creation, intellectual property, and creative control.[9]

The decisive question is therefore not whether artificial intelligence can imitate cinematic reality. The decisive question is how production chains, rights architectures, and creative roles are reconfigured once models themselves become productive actors.

Programmable Cinema – Generative reconstruction of a high-speed sequence

Video: el.cine | Source: Today in AI (Instagram) | Analytical classification: Ulrich Buckenlei

This example stands as a representative case for the transition from capital-intensive film production to model-based iteration. It does not mark the end of filmmaking, but the beginning of a programmable production logic.

The final chapter derives a strategic perspective from this development, for studios, platforms, and creators.

Sources and References

- OpenAI, “Video Generation Models and Diffusion Architectures”, Technical Overview of Text-to-Video Systems, 2024–2025. [1]

- Google DeepMind, “Scalable Video Generation with Diffusion Transformers”, Research Paper, 2024. [2]

- Runway Research, Technical Documentation on Gen-2 and Gen-3 Text-to-Video Models, 2023–2025. [3]

- Meta AI, “Emu Video and Multimodal Generative Models”, 2024. [4]

- Journal of Media Economics, Studies on Cost Structures in Film and Digital Production, 2022–2025. [5]

- MIT Technology Review, Analyses on Generative AI in Media Production, 2024–2026. [6]

- ACM SIGGRAPH Proceedings, Papers on Neural Rendering and Real-Time Simulation Pipelines, 2023–2025. [7]

- Nature Machine Intelligence, Reviews on Diffusion Models and Generative Architectures, 2023–2025. [8]

- Harvard Business Review, “When Technology Shifts the Competitive Bottleneck”, 2024. [9]

- European Audiovisual Observatory, Reports on Film Industry Economics and Production Financing, 2023–2025. [10]

- IEEE Transactions on Visualization and Computer Graphics, Research on Model-Based Rendering and Computational Cinematography, 2022–2025. [11]

- Stanford HAI, Policy Briefs on Generative AI in Creative Industries, 2024–2026. [12]

- European Union AI Act, Regulatory Framework for High-Risk AI Systems, 2024. [13]

- World Intellectual Property Organization, Reports on AI-Generated Works and IP Implications, 2023–2025. [14]

- McKinsey & Company, “The Economic Potential of Generative AI in Media and Entertainment”, 2024. [15]

- MIT Technology Review, Analyses on generative AI in film and media production as well as on the public debate about production costs, 2024–2026. [16]

- Today in AI, Instagram post on the AI-generated racing scene, video by el.cine, 2026. [17]

When Film Production Becomes Programmable, It Requires More Than a Model

Programmable Cinema is not hype, but a new production logic. As soon as visual complexity is no longer primarily scaled through locations, crews, and equipment, but through models, parameter control, and compute power, processes, responsibilities, and cost structures change. The decisive question then is not whether AI can generate images, but how hybrid pipelines can be reliably planned, controlled, and translated into market-ready results.

For decision-makers, the basis for evaluation shifts accordingly. The critical success factor is not any individual tool, but a clean end-to-end architecture: from analysis and goal definition through creative direction, data and model selection, proof of concept, production design, motion, and compositing to quality control, rights management, and deployment across channels. Anyone seeking to leverage programmable film production needs a pipeline that combines creative precision with operational stability.

This is exactly where the Visoric expert team in Munich operates. We support companies in strategically positioning AI-supported film production, setting up hybrid processes efficiently, and delivering high-quality content without losing sight of brand standards, production readiness, and governance requirements.

The Visoric expert team: Ulrich Buckenlei and Nataliya Daniltseva discussing AI, XR, and scalable production architectures

Source: VISORIC GmbH | Munich

- Analysis and consulting → Evaluate use cases, cost logic, and feasibility

- Concept development → Story architecture, style definition, and prompt strategy

- Proof of concept → Rapid prototyping and quality comparison

- Hybrid production → Combine real capture and AI generation

- Execution → Animation, compositing, editing, and distribution

- Governance → Quality assurance, rights clarification, and pipeline standards

If you would like to explore how AI-generated and hybrid film processes can be implemented reliably, at high quality, and efficiently within your organization, a conversation with the Visoric expert team in Munich is worthwhile.

Contact Persons:

Ulrich Buckenlei (Creative Director)

Mobile: +49 152 53532871

Email: ulrich.buckenlei@visoric.com

Nataliya Daniltseva (Project Manager)

Mobile: +49 176 72805705

Email: nataliya.daniltseva@visoric.com

Address:

VISORIC GmbH

Bayerstraße 13

D-80335 Munich