Impressions from the Mobile World Congress 2026 in Barcelona

Photo: © Ulrich Buckenlei | Visoric GmbH

While the Mobile World Congress 2026 in Barcelona is still underway, a clear development is already emerging. Intelligent networks, Artificial Intelligence, and Extended Reality are converging into the core infrastructure of modern value creation.

Across the exhibition halls it becomes clear that the focus is no longer on individual products. What is visible instead is a structural shift. Systems are now designed to understand situations and act instantly. Intelligence is moving closer to where real events take place.

From Cloud Logic to Situational Intelligence at the Network Edge

For many years digitalization followed a clear technical logic. Data was captured locally, transferred to centralized data centers, and processed there. Intelligence was almost always located inside large cloud infrastructures. Decisions were calculated and then transmitted back to machines, systems, or applications.

This model worked well as long as data volumes were manageable and decisions did not need to be made in real time. However, with the rapid progress of Artificial Intelligence and the growing number of connected devices, this architecture is increasingly reaching its limits.

At the Mobile World Congress 2026 a clear transformation is becoming visible. More and more computing power is moving closer to where data is generated. Systems are being designed to analyze situations instantly and make decisions directly on site.

The technical drivers are clear: models are becoming more efficient, hardware more powerful, and network infrastructures more stable. This makes it possible to run intelligence not only centrally but distributed across entire systems.

- Systems gain context awareness and situational understanding

- Decisions are executed directly at the point where data originates

- Dependencies on centralized infrastructure are reduced

AI Data Center infrastructure at the Mobile World Congress 2026 in Barcelona

Photo: © Ulrich Buckenlei | Visoric GmbH

Demonstrations of such systems can be seen at many booths at the Mobile World Congress. Manufacturers are presenting complete AI infrastructures designed specifically to run modern AI applications. The point is not only raw computing power but the combination of specialized processors, high-speed networking, energy-efficient cooling, and optimized software architectures.

These systems form the foundation for a new generation of intelligent applications. Production facilities can monitor processes in real time, logistics systems respond dynamically to changes, and technical infrastructures automatically adapt to new situations.

This transformation therefore affects not just individual technologies but the entire architecture of digital systems. Intelligence becomes a distributed capability available wherever it is needed. This development defines many of the innovations presented at the Mobile World Congress 2026.

Embodied AI as a New Development Paradigm

One term stands out in many conversations at the Mobile World Congress 2026: Embodied AI. It describes systems that can perceive their environment, interpret it, and act autonomously. Intelligence is no longer hidden abstractly inside software models, but integrated into physical systems that interact with the real world.

While traditional Artificial Intelligence mainly analyzed data, Embodied AI adds perception and action. Sensors capture situations, models interpret the environment, and mechanical or digital systems execute decisions immediately. Intelligence is no longer only computed; it becomes visible.

Technology exhibitions like the Mobile World Congress provide a particularly vivid illustration of this transformation. Humanoid robots, autonomous machines, and intelligent assistance systems demonstrate how AI is increasingly integrated into physical systems. Instead of merely processing data, these systems perform real tasks.

- Learning processes run continuously

- Real and synthetic training data are combined

- Models improve directly during operation

Humanoid robot demonstrating calligraphy at a partner booth during Mobile World Congress 2026

Photo: © Ulrich Buckenlei | Visoric GmbH

These demonstrations highlight the fundamental difference compared to earlier AI systems. While many applications previously worked invisibly in the background, Embodied AI becomes visibly present. Machines can grasp objects, use tools, or, as shown in this demonstration, execute precise movement sequences.

Technically, this development is based on the combination of sensors, robotics, and powerful AI models. Cameras capture the environment, neural networks interpret visual information, and control systems translate these insights into movements. The result is systems that not only analyze but also act.

For companies this represents a major shift in perspective. Artificial Intelligence is no longer viewed purely as a software solution, but as part of a new technological infrastructure. Perception, analysis, and action become integrated into a single system.

The Mobile World Congress 2026 clearly shows that AI is evolving from a pure analytics tool into an operational technology. Embodied AI will play a central role in robotics, industrial automation, logistics, and many other domains where intelligent systems interact directly with the physical world.
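The sensor, model, and control chain described in this section can be illustrated with a deliberately minimal Python sketch. It assumes a single actuator moving toward a target: the hypothetical functions `perceive`, `interpret`, and `act` stand in for the camera, the neural network, and the control system, and the proportional gain of 0.5 is an arbitrary illustrative value. Real embodied systems use learned perception models and far more elaborate controllers; this only shows how the three stages form a closed loop.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    target: float    # target position as reported by the (simulated) camera
    position: float  # current actuator position


def perceive(true_target: float, position: float) -> Observation:
    """Stand-in for the camera: here it simply reports exact values."""
    return Observation(target=true_target, position=position)


def interpret(obs: Observation) -> float:
    """Stand-in for the perception model: turns the observation into a position error."""
    return obs.target - obs.position


def act(position: float, error: float, gain: float = 0.5) -> float:
    """Proportional controller: move by a fixed fraction of the remaining error."""
    return position + gain * error


def run_loop(target: float, start: float, steps: int) -> float:
    """Run the perceive -> interpret -> act cycle a fixed number of times."""
    position = start
    for _ in range(steps):
        obs = perceive(target, position)
        error = interpret(obs)
        position = act(position, error)
    return position


if __name__ == "__main__":
    print(run_loop(target=10.0, start=0.0, steps=20))
```

With a gain of 0.5, each cycle closes half of the remaining gap, so `run_loop(10.0, 0.0, 20)` ends very close to the target (the remaining error is 10 × 0.5^20, roughly 0.00001). Because the loop perceives again after every action, it would also correct itself if the target moved between cycles.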

Distributed Learning as the Foundation of Scalable Systems

A central building block of this development is distributed learning. Insights are generated locally without transferring all raw data to centralized systems. This approach protects sensitive data while still enabling collective improvements.

Especially in regulated industries or environments with limited connectivity, this method opens new possibilities. Machines, sensors, and production systems can analyze data locally and learn from it, while only selected insights or model updates are shared. This creates a network of intelligent systems that improve together without losing control over data.

- Knowledge is shared while data often remains local

- Scaling becomes possible even for sensitive applications

- Systems become more resilient to disruptions
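One widely used form of distributed learning is federated averaging (FedAvg). The Python sketch below is a minimal illustration under simplifying assumptions: each hypothetical client fits a one-parameter model `y = w * x` to its own data by gradient descent, and only the learned weight and the sample count are sent to a coordinator, which averages them weighted by sample count. The datasets, learning rate, and round counts are invented for illustration; production systems add secure aggregation, client sampling, and much larger models.

```python
from typing import List, Tuple

# Each client's data is a list of (x, y) pairs that stays on that client.
Dataset = List[Tuple[float, float]]


def local_update(w: float, data: Dataset, lr: float = 0.01, epochs: int = 20) -> float:
    """Train the one-parameter model y = w * x on local data with gradient descent."""
    for _ in range(epochs):
        # Gradient of the mean squared error: mean(2 * x * (w * x - y)).
        grad = sum(2 * x * (w * x - y) for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_average(updates: List[Tuple[float, int]]) -> float:
    """Average client weights, weighted by each client's number of samples."""
    total = sum(n for _, n in updates)
    return sum(w * n for w, n in updates) / total


def train_federated(clients: List[Dataset], rounds: int = 10) -> float:
    """Repeat: broadcast the global weight, train locally, aggregate the results."""
    w_global = 0.0
    for _ in range(rounds):
        # Only (weight, sample count) pairs are returned to the coordinator.
        updates = [(local_update(w_global, data), len(data)) for data in clients]
        w_global = federated_average(updates)
    return w_global


if __name__ == "__main__":
    # Three hypothetical sites whose data all follow y = 3 * x.
    clients = [
        [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)],
        [(0.5, 1.5), (1.5, 4.5)],
        [(2.0, 6.0), (4.0, 12.0)],
    ]
    print(train_federated(clients))  # converges toward 3.0
```

In this sketch the coordinator only ever receives `(weight, sample_count)` pairs, never the raw `(x, y)` data, which mirrors the idea that knowledge is shared while data stays local. Weighting by sample count keeps a client with little data from pulling the shared model as strongly as a client with more.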

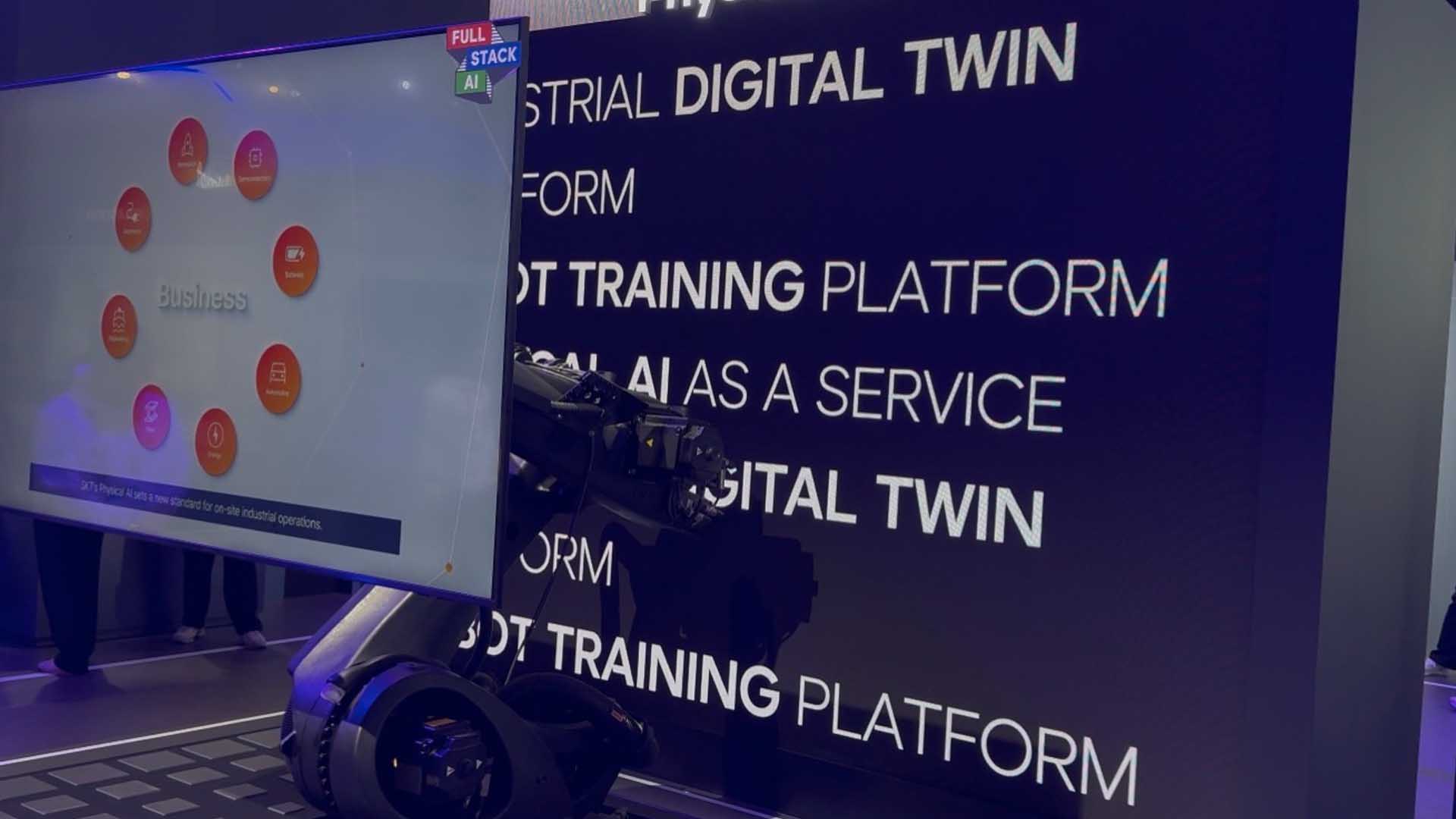

Industrial platforms for Digital Twins and robot training at the Mobile World Congress 2026

Photo: © Ulrich Buckenlei | Visoric GmbH

Such concepts can be observed across many booths at the Mobile World Congress 2026. Manufacturers are presenting platforms where industrial applications can be trained, simulated, and later transferred into real systems. The concept of the digital twin appears frequently in this context.

Digital twins make it possible to virtually replicate complex machines or entire production lines. Within this simulated environment, models can be trained, errors analyzed, and new workflows tested before they are implemented in the real world. This significantly reduces risks and accelerates the development of new applications.

Combined with distributed learning methods, this creates a powerful infrastructure. Systems learn locally from their experiences while overarching platforms consolidate and refine new insights. Robots, production systems, or autonomous devices can therefore continuously improve without retraining every system individually.

This combination of local intelligence and central coordination will play a crucial role in the coming years. It enables companies to scale AI not only in isolated applications but across entire infrastructures.

Step by step, a new form of industrial intelligence emerges: distributed, adaptive, and closely connected to real processes.
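The test-before-deploying workflow described for digital twins can be sketched in Python with a deliberately simplified stand-in for a twin: a hypothetical thermal model of a single machine, stepped once per production cycle. A candidate workflow (a sequence of per-cycle loads) is accepted only if the simulated temperature stays below a limit. The model form, `heat_per_load`, `cooling`, and the 60 °C limit are illustrative assumptions, not values from any real platform; an industrial twin would be calibrated against measured machine data.

```python
from typing import List


def simulate_temperature(loads: List[float], ambient: float = 20.0,
                         heat_per_load: float = 2.0, cooling: float = 0.1) -> List[float]:
    """Hypothetical thermal twin of one machine, stepped once per production cycle."""
    temp = ambient
    trace = []
    for load in loads:
        # Heat added by this cycle's load minus cooling proportional to the
        # difference from ambient temperature.
        temp += heat_per_load * load - cooling * (temp - ambient)
        trace.append(temp)
    return trace


def workflow_is_safe(loads: List[float], max_temp: float = 60.0) -> bool:
    """Accept a candidate workflow only if the simulated peak stays within the limit."""
    return max(simulate_temperature(loads)) <= max_temp


if __name__ == "__main__":
    candidates = {"moderate": [1.5] * 100, "aggressive": [2.5] * 100}
    for name, loads in candidates.items():
        print(name, workflow_is_safe(loads))
```

With these parameters a constant load `L` settles at `20 + 20 * L` degrees, so a workflow at load 1.5 approaches 50 °C and is accepted, while load 2.5 approaches 70 °C and is rejected in simulation before it ever reaches the real machine.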

Extended Reality as the Interface of Intelligent Systems

As Artificial Intelligence increasingly makes decisions and systems become more autonomous, one key question arises: how do humans interact with these complex digital structures? This is where Extended Reality gains new importance.

XR technologies allow digital information to be visualized directly within spatial contexts. Data, simulations, or machine states are no longer displayed only on traditional screens, but appear as three-dimensional visualizations in real space. Users can inspect, modify, and interact with digital models while remaining inside their real environment.

This type of representation fundamentally transforms the interaction between humans and digital infrastructure. Complex data becomes easier to understand, relationships become visible faster, and decisions can be made on a much stronger informational basis.

- Complex data is visualized spatially

- Digital twins can be experienced directly within work environments

- Humans and AI collaborate more closely

Hands-on demonstration of the Samsung Galaxy XR headset (Project Moohan) at the Mobile World Congress 2026

Photo: © Ulrich Buckenlei | Visoric GmbH

In industrial environments these technologies open entirely new possibilities. Engineers can analyze digital twins of machines, visualize maintenance procedures, or simulate production processes. Complex technical systems become easier to understand and decisions can be made faster.

At the Mobile World Congress 2026 it also becomes clear that another XR ecosystem is emerging alongside Apple's. Samsung and Google are collaborating on a new generation of devices based on the Android XR platform. The Samsung Galaxy XR headset, internally known as Project Moohan, is among the first devices built for this platform.

These devices are expected to serve not only entertainment purposes but also industrial applications, planning, training, and collaboration. XR is evolving into an interface through which humans interact with complex digital systems.

While Artificial Intelligence analyzes data and optimizes processes, Extended Reality translates this information into spatially understandable experiences. Users no longer just see data. They experience it within the context of their environment.

XR therefore increasingly becomes the operational interface of the next generation of digital infrastructure. This development is clearly visible across many demonstrations at the Mobile World Congress 2026.

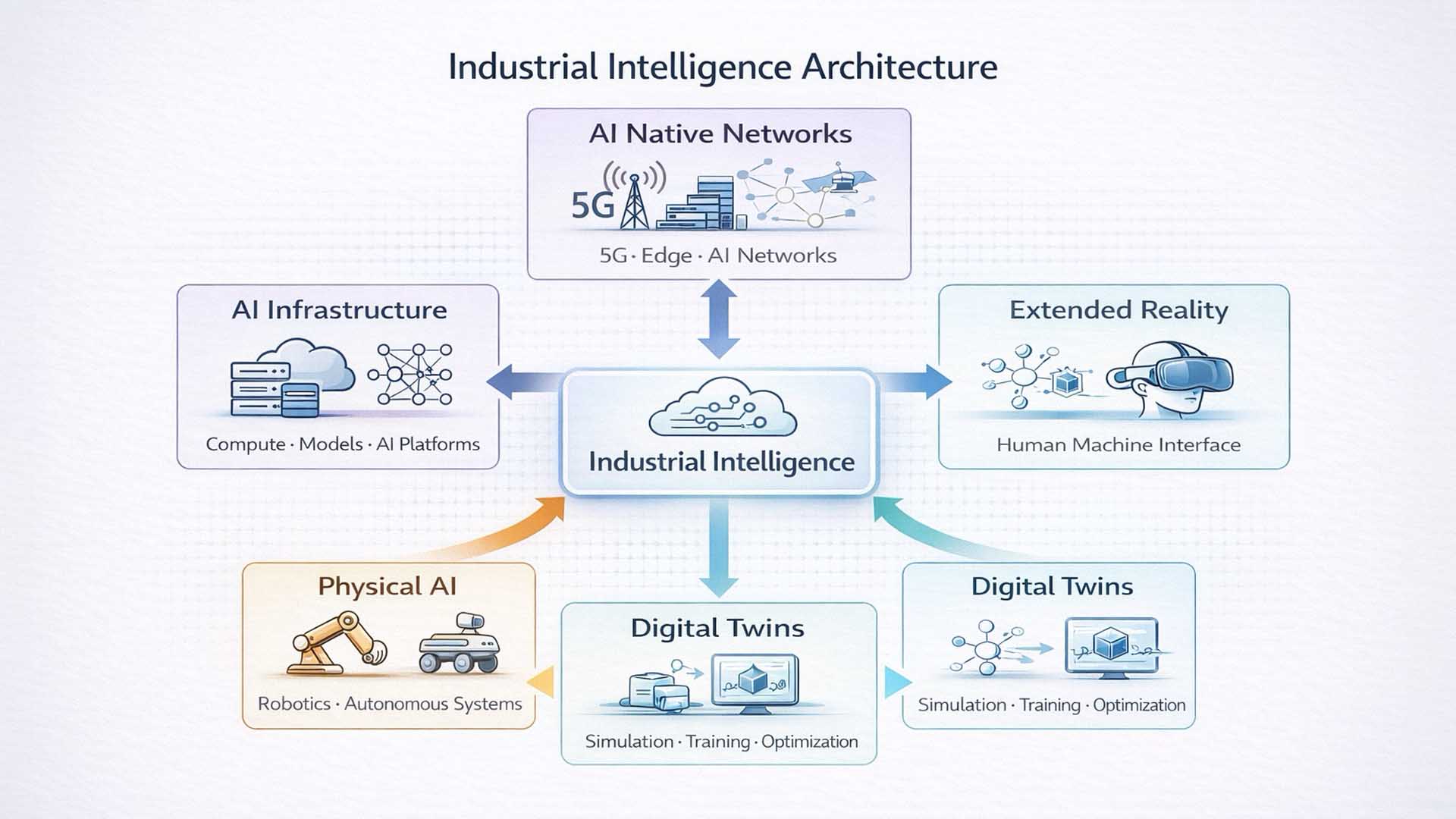

The Architecture of Industrial Intelligence

The Mobile World Congress 2026 reveals not just isolated technological innovations but a new systemic architecture. Artificial Intelligence is evolving from a standalone software capability into an infrastructure that connects physical systems, networks, and digital models.

The core idea can be described as industrial intelligence. It represents the interaction of several technological layers that together enable new forms of automation, simulation, and collaboration.

- AI Native Networks provide the communication infrastructure

- AI Infrastructure delivers computing power and models

- Physical AI connects artificial intelligence with real machines

- Digital Twins enable simulation and continuous training

- Extended Reality creates new interfaces between humans and systems

Industrial Intelligence Architecture – Technological convergence observed at Mobile World Congress 2026

Visualization: XR Stager Research / Visoric GmbH

At the center of this architecture lies industrial intelligence itself. It emerges from the interaction of several technological developments that become visible across many booths at the Mobile World Congress.

The foundation consists of AI Native Networks. Modern mobile networks are increasingly evolving into intelligent platforms that dynamically connect data, computing power, and applications. Technologies such as 5G, edge computing, and future 6G concepts create the infrastructure required for these distributed systems.

Building on this foundation, a new generation of AI infrastructures is emerging. High-performance data centers, specialized chips, and scalable models enable the analysis of massive data volumes and the control of complex systems.

With Physical AI, artificial intelligence finally moves beyond the software layer. Robots, autonomous machines, and intelligent devices can perceive their surroundings, make decisions, and perform physical tasks.

Digital twins represent another central layer of this architecture. They make it possible to replicate real systems virtually, perform simulations, and continuously improve models. Machines, production facilities, and even entire infrastructures can therefore be planned and optimized with far greater precision.

Extended Reality ultimately forms the interface between humans and these complex systems. Spatial interfaces allow digital information to be displayed directly within real environments and enable intuitive interaction with it.

Together, these technologies form the foundation of a new generation of intelligent industrial systems. The Mobile World Congress 2026 makes clear that this architecture is taking shape within a growing ecosystem of technological innovations.

Video Impressions from the Mobile World Congress 2026

The Mobile World Congress in Barcelona is one of the most important meeting points for the global technology industry. Companies from telecommunications, artificial intelligence, robotics, and extended reality present their latest developments here and provide insights into upcoming industrial applications.

The event demonstrates how rapidly the technological landscape is currently evolving. Intelligent networks, edge computing, digital twins, and Physical AI are increasingly merging into a shared infrastructure. Systems are becoming not only more powerful but also more interconnected.

Concepts that often sound abstract in presentations become immediately tangible at the exhibition. Robots demonstrate systems capable of learning, new XR interfaces enable spatial interaction with data, and modern network architectures show how computing power is moving closer to real-world processes.

The following video shows personal impressions directly from the exhibition grounds. The footage captures the atmosphere, the technologies, and the international diversity of this event.

Impressions from the Mobile World Congress 2026 in Barcelona

Video footage: © Ulrich Buckenlei | XR Stager Newsroom

These impressions show that many developments that once seemed like future scenarios are now taking concrete shape. Networks are becoming more intelligent, machines more autonomous, and digital models more precise.

The Mobile World Congress therefore illustrates that a new technological infrastructure is currently emerging. It connects communication networks, artificial intelligence, physical systems, and immersive interfaces into an increasingly integrated digital reality.

The following section brings these developments together once again and places them in their industrial context.

From Trade Fair Signals to Strategic Business Decisions

The Mobile World Congress 2026 reveals a clear pattern. Artificial Intelligence is becoming infrastructure. Networks are evolving into intelligent platforms. Physical AI is moving AI into real machines. Digital twins are becoming environments for training and optimization. And Extended Reality is becoming the interface through which humans interact with complex systems.

For companies this creates a new type of strategic pressure. The question is no longer whether these technologies will emerge. The real question is which of these building blocks are relevant for the business, how they should interact, and how they can be integrated into robust operational processes rather than remaining demonstration effects.

Many organizations do not fail because of the technology itself but because of how they interpret it. Which use cases are realistic? Which data and systems are required? What does a target architecture look like? How are security, governance, and responsibilities defined? And how does an idea seen at a trade fair evolve into a scalable operational system?

This is exactly where the Visoric expert team in Munich works. We help organizations translate the signals from events such as the Mobile World Congress into structured strategies, architecture concepts, and implementable roadmaps that work in real organizations.

The Visoric expert team from Munich supports companies from trade fair analysis to executable transformation roadmaps

Source: VISORIC GmbH | Munich

- MWC Trend Analysis → evaluation of relevant developments for your industry and business model

- Technology Assessment → evaluation of whether AI, XR, Digital Twins, or new platforms align with your strategic goals

- Use Case Design → identification of applications with measurable impact and clearly defined requirements

- Target Architecture → design of end-to-end systems across networks, edge computing, cloud infrastructure, data, and interfaces

- Pilot and Proof of Concept → rapid validation of technical and economic feasibility

- Workshops and Change Enablement → enabling informed decisions and anchoring implementation within the organization

If you want to translate the impressions from the Mobile World Congress into concrete next steps, a structured discussion is worthwhile. Together we can identify which technological building blocks are truly relevant for your company and how they can evolve into a practical roadmap.

Contacts:

Ulrich Buckenlei (Creative Director)

Mobile: +49 152 53532871

E-Mail: ulrich.buckenlei@visoric.com

Nataliya Daniltseva (Project Manager)

Mobile: +49 176 72805705

E-Mail: nataliya.daniltseva@visoric.com

Address:

VISORIC GmbH

Bayerstraße 13

D-80335 München